Introduction to the Generative AI Tech Stack

Generative AI has rapidly evolved, transforming industries by enabling machines to generate new content, ideas, and solutions. At the core of this innovation is the Generative AI tech stack, a collection of technologies and methodologies that power these advanced AI systems. In this article, we’ll explore the components of the Generative AI tech stack, how they work together, and their impact on various fields.

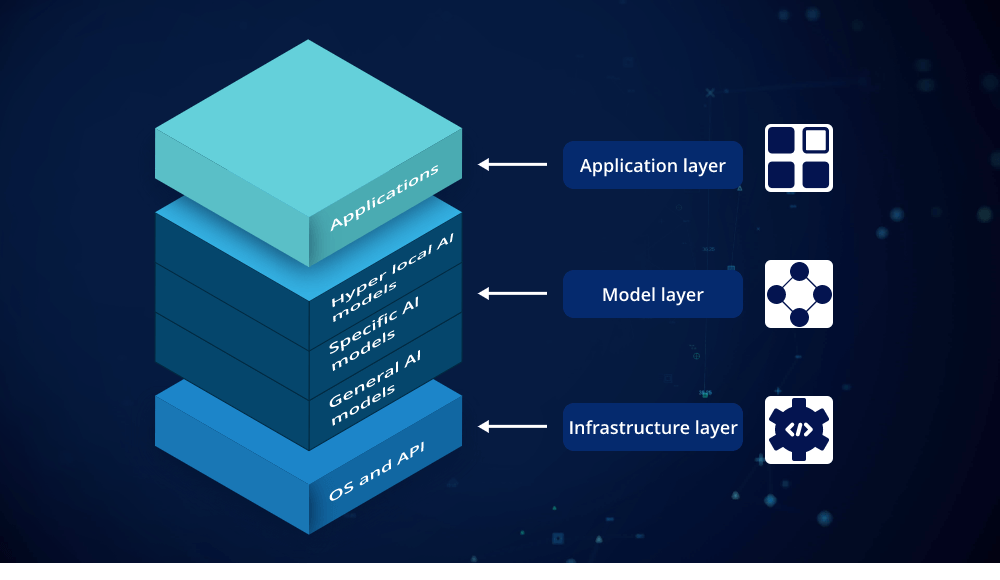

Understanding the Generative AI Tech Stack

The Generative AI tech stack is a layered framework that integrates multiple technologies to create intelligent systems capable of producing new content. Each layer in the tech stack plays a crucial role in the development and deployment of generative AI models. Let’s break down these layers to understand their functions and significance.

1. Data Collection and Preprocessing

The foundation of any effective Generative AI tech stack is high-quality data. Data collection involves gathering diverse datasets that are representative of the tasks the AI will perform. This data can include text, images, audio, and more, depending on the application.

Preprocessing is the next step, where raw data is cleaned and formatted. Normalization puts values into a consistent scale or form, tokenization splits text into units the model can index, and data augmentation creates modified copies of existing examples to enlarge the training set. Careful preprocessing helps the model learn from relevant, accurate information rather than from noise.
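As a rough illustration of these steps for text data, here is a minimal sketch using only the Python standard library. The function names (`normalize`, `tokenize`, `augment`) and the random token-dropping augmentation are assumptions chosen for this example, not a prescribed pipeline; production systems typically use trained subword tokenizers and task-specific augmentation.

```python
import random
import re


def normalize(text: str) -> str:
    """Lowercase the text and collapse runs of whitespace."""
    return re.sub(r"\s+", " ", text.strip().lower())


def tokenize(text: str) -> list[str]:
    """Split into word tokens and standalone punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


def augment(tokens: list[str], drop_prob: float, rng: random.Random) -> list[str]:
    """Randomly drop tokens to create a noisy variant (one simple augmentation)."""
    kept = [tok for tok in tokens if rng.random() >= drop_prob]
    return kept or tokens  # never return an empty example


raw = "  Generative   AI creates NEW content!  "
tokens = tokenize(normalize(raw))
variant = augment(tokens, drop_prob=0.2, rng=random.Random(0))
print(tokens)
print(variant)
```

Passing a seeded `random.Random` instance keeps the augmentation reproducible, which makes preprocessing bugs easier to track down.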

2. Model Architecture

The core of the Generative AI tech stack is the model architecture. This layer defines how the AI processes data and generates outputs. Key architectures in generative AI include:

- Generative Adversarial Networks (GANs): GANs consist of two networks, a generator and a discriminator, that compete with each other. The generator creates new content, while the discriminator tries to tell generated samples apart from real ones. Training the two against each other pushes the generator toward more realistic output.

- Variational Autoencoders (VAEs): VAEs learn a probabilistic model of the input data. An encoder maps each input to a distribution over a latent space, and a decoder maps points sampled from that space back to data, which is how new samples are generated.

- Transformers: Transformers are a type of neural network architecture that excels at processing sequential data. They are the backbone of many state-of-the-art generative models, including GPT (Generative Pre-trained Transformer) models, which are widely used for text generation.
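To make the Transformer entry more concrete, the sketch below implements scaled dot-product attention, the operation at the heart of that architecture, in plain Python. It is a simplified illustration under stated assumptions: real Transformers apply learned projection matrices to produce queries, keys, and values, use multiple heads, and run on tensor libraries. Here the toy token vectors are used directly for all three roles.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention for small lists of vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(key dimension).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        outputs.append([
            sum(w * v[d] for w, v in zip(weights, values))
            for d in range(len(values[0]))
        ])
    return outputs


# Three toy 2-dimensional token vectors (assumed values, no learned projections).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed = attention(tokens, tokens, tokens)
for row in mixed:
    print(row)
```

Each output row blends information from every token in the sequence, weighted by similarity, which is what lets Transformers relate distant positions in a sequence.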

3. Training and Optimization

Once the model architecture is defined, the next step in the Generative AI tech stack is training. This process involves feeding the preprocessed data into the model and adjusting its parameters to minimize a loss function that measures the model’s errors. Backpropagation computes the gradient of the loss with respect to each parameter, and an optimizer such as gradient descent uses those gradients to update the parameters.
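The parameter-update loop can be sketched on a deliberately tiny problem: fitting a single weight `w` so that `w * x` matches `y`. The data, learning rate, and step count below are assumptions picked for illustration, and the gradient is written out by hand; deep learning frameworks compute it automatically via backpropagation.

```python
def train(xs, ys, learning_rate=0.05, steps=200):
    """Fit y = w * x by gradient descent on mean squared error."""
    w = 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradient of mean((w*x - y)^2) with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        # Step against the gradient to reduce the loss.
        w -= learning_rate * grad
    return w


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # generated from y = 2x
w = train(xs, ys)
print(w)  # close to 2.0
```

Generative models follow the same pattern, only with millions or billions of parameters and a loss suited to the task.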

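Hyperparameter tuning is also a critical part of this stage. Hyperparameters are settings fixed before training rather than learned from data, such as the learning rate, batch size, and number of layers. Tuning them means trying different combinations and keeping the one that gives the best accuracy and efficiency on held-out data.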
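One simple tuning strategy is grid search: try every combination from a small set of candidate values and keep the best. The sketch below assumes a toy objective, `final_loss`, that stands in for "train a model and measure validation loss"; the candidate values are likewise illustrative.

```python
import itertools


def final_loss(learning_rate: float, steps: int) -> float:
    """Run gradient descent on f(w) = (w - 3)^2 from w = 0 and return the final loss."""
    w = 0.0
    for _ in range(steps):
        w -= learning_rate * 2 * (w - 3)
    return (w - 3) ** 2


# Candidate hyperparameter values (assumed for this example).
grid = {
    "learning_rate": [0.001, 0.01, 0.1],
    "steps": [10, 50],
}

results = []
for lr, steps in itertools.product(grid["learning_rate"], grid["steps"]):
    results.append((final_loss(lr, steps), lr, steps))

# Tuples sort by their first element, so min() picks the lowest loss.
best_loss, best_lr, best_steps = min(results)
print(best_lr, best_steps, best_loss)
```

Grid search grows expensive quickly as hyperparameters are added, which is why random search or Bayesian optimization is often preferred for larger models.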

4. Deployment and Integration

After training, the generative model needs to be deployed and integrated into applications. This involves setting up the model in a production environment where it can interact with real-world data and users. Deployment tools and platforms, such as cloud services and containerization technologies, facilitate this process.

Integration with existing systems is also crucial. The generative AI model must work seamlessly with other software and tools used by an organization. APIs and SDKs (Software Development Kits) are commonly used to connect the model with applications, so that other systems can send it requests and consume its output.
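As a sketch of what an API boundary around a model might look like, the handler below validates a JSON request and returns a status code with a JSON response. Everything here is hypothetical: `generate` is a stand-in that merely truncates the prompt, and the `prompt` and `max_words` fields are an assumed request schema, not any particular vendor's API. A real deployment would put this logic behind a web framework and call an actual model.

```python
import json


def generate(prompt: str, max_words: int) -> str:
    """Hypothetical stand-in for a trained model: returns the truncated prompt."""
    return " ".join(prompt.split()[:max_words])


def handle_request(body: str) -> tuple[int, str]:
    """Validate a JSON request and return (HTTP-style status code, JSON response)."""
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return 400, json.dumps({"error": "body must be valid JSON"})
    if not isinstance(payload, dict):
        return 400, json.dumps({"error": "body must be a JSON object"})

    prompt = payload.get("prompt")
    if not isinstance(prompt, str) or not prompt.strip():
        return 400, json.dumps({"error": "'prompt' must be a non-empty string"})

    max_words = payload.get("max_words", 20)
    if isinstance(max_words, bool) or not isinstance(max_words, int) or max_words < 1:
        return 400, json.dumps({"error": "'max_words' must be a positive integer"})

    return 200, json.dumps({"output": generate(prompt, max_words)})


status, response = handle_request(
    '{"prompt": "write a short product tagline", "max_words": 3}'
)
print(status, response)
```

Validating input at the boundary keeps malformed requests away from the model and gives client applications clear error messages.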

5. Evaluation and Monitoring

Continuous evaluation and monitoring are essential to ensure the generative model performs well over time. This involves assessing the quality and relevance of the generated content and identifying any issues or biases. Quantitative metrics such as precision and recall, together with qualitative signals such as user feedback, are used to gauge performance.
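Precision and recall can be computed from two sets of items. In the sketch below, the scenario is an assumption made for illustration: `flagged_good` holds IDs of generated samples an automatic quality filter accepted, and `reviewer_good` holds IDs that human reviewers judged acceptable.

```python
def precision_recall(predicted: set, relevant: set) -> tuple[float, float]:
    """Precision = hits / predicted, recall = hits / relevant."""
    hits = len(predicted & relevant)
    precision = hits / len(predicted) if predicted else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical sample IDs for illustration only.
flagged_good = {1, 2, 3, 4}
reviewer_good = {2, 3, 4, 5, 6}

precision, recall = precision_recall(flagged_good, reviewer_good)
print(precision, recall)
```

Here precision answers "of the samples the filter accepted, how many were actually good?" and recall answers "of the good samples, how many did the filter accept?"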

Monitoring tools help track the model’s behavior and performance in real time, allowing for quick adjustments and improvements. Regular updates and retraining may be necessary to keep the model aligned with evolving data and requirements.
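A minimal monitoring sketch, assuming each generated output receives a quality score between 0 and 1 (for example, from user ratings): keep a rolling window of recent scores and raise a flag when the average falls below a threshold. The class name, window size, and threshold are all illustrative choices.

```python
from collections import deque


class QualityMonitor:
    """Track a rolling mean of quality scores and flag drops below a threshold."""

    def __init__(self, window: int, threshold: float):
        self.scores = deque(maxlen=window)  # old scores fall off automatically
        self.threshold = threshold

    def record(self, score: float) -> bool:
        """Add a score; return True when the full window's mean is below the threshold."""
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        mean = sum(self.scores) / len(self.scores)
        return window_full and mean < self.threshold


monitor = QualityMonitor(window=3, threshold=0.7)
alerts = [monitor.record(s) for s in [0.9, 0.8, 0.9, 0.5, 0.4, 0.6]]
print(alerts)
```

Waiting for a full window before alerting avoids false alarms from a single low score; a sustained drop is a more reliable signal that retraining may be needed.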

Applications of the Generative AI Tech Stack

The Generative AI tech stack has a wide range of applications across various domains:

- Content Creation: Generative AI models are used to create high-quality text, images, and videos, revolutionizing content creation in media, marketing, and entertainment.

- Healthcare: In healthcare, generative AI is employed to synthesize medical images, generate drug molecules, and even assist in diagnostics and treatment planning.

- Finance: Financial institutions use generative AI to generate synthetic data for risk assessment, fraud detection, and algorithmic trading.

- Gaming: The gaming industry benefits from generative AI by creating realistic environments, characters, and storylines, enhancing the overall gaming experience.

Conclusion

The Generative AI tech stack is a powerful framework that combines data collection, model architecture, training, deployment, and evaluation to create advanced AI systems capable of generating new and innovative content. Understanding each component and how they interact is crucial for leveraging the full potential of generative AI. As technology continues to evolve, the Generative AI tech stack will play an increasingly important role in shaping the future of various industries.

By familiarizing yourself with the Generative AI tech stack, you can better appreciate the complexities of these systems and their transformative impact on the world. Whether you’re a developer, researcher, or industry professional, mastering this tech stack is essential for harnessing the power of generative AI.
