Introduction to Multimodal Models

In artificial intelligence (AI), the term “multimodal models” is becoming increasingly prominent. These models process several types of data at the same time. By integrating information from multiple modalities, such as text, images, and audio, they can capture context that a single data type would miss, which opens up applications across many industries.

What Are Multimodal Models?

Multimodal models are AI systems designed to handle and interpret data from multiple sources or modalities. Unlike traditional models that are optimized for a single type of data, such as text or images, multimodal models combine several types of information to enhance their understanding and performance. For instance, a multimodal model might process both visual and textual data to better understand the context of a scene or conversation.

How Do Multimodal Models Work?

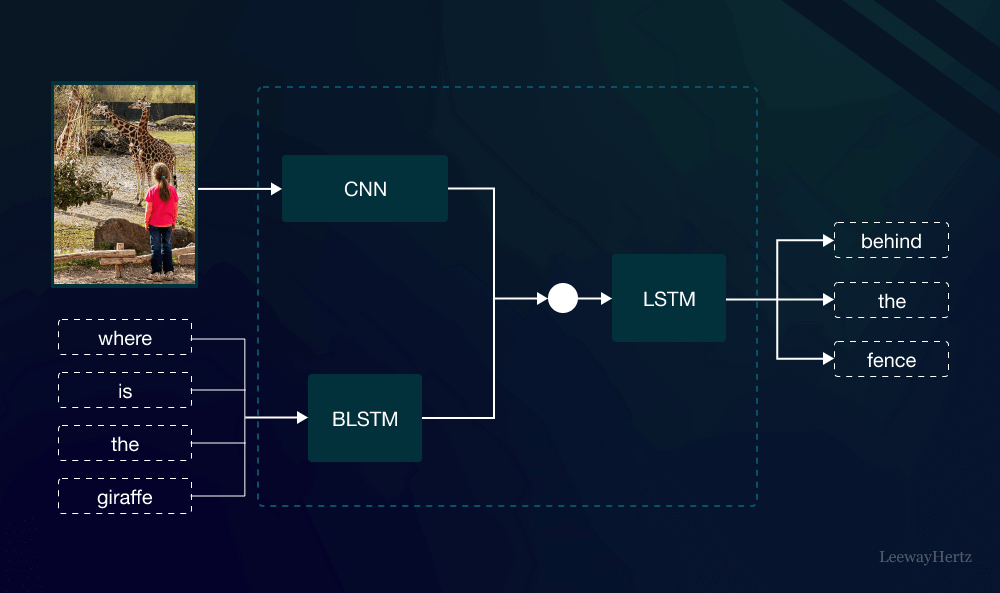

The core of multimodal models lies in their ability to integrate diverse types of data. This is done with architectures that fuse information from different sources. A common approach uses a separate neural network to process each modality and then combines their outputs in a unified model, for example by concatenating the per-modality representations before a shared prediction layer. This fusion lets the model draw on the strengths of each modality, which can make its predictions more accurate and more aware of context.
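The "separate networks, then combine" idea can be sketched in a few lines of Python. This is a minimal illustration, not a production architecture: the function names (`encode`, `fuse`, `predict`), the feature vectors, and all weights are hypothetical, and each "network" is reduced to a single linear layer with a tanh activation. Fusion here is plain concatenation, which is only one of several possible strategies.

```python
import math
from typing import List, Sequence

Vector = List[float]
Matrix = Sequence[Sequence[float]]


def encode(weights: Matrix, features: Sequence[float]) -> Vector:
    """Stand-in for a modality-specific network: one linear layer with tanh."""
    return [math.tanh(sum(w * x for w, x in zip(row, features))) for row in weights]


def fuse(embeddings: Sequence[Vector]) -> Vector:
    """Concatenate per-modality embeddings into one joint vector."""
    return [value for embedding in embeddings for value in embedding]


def softmax(scores: Sequence[float]) -> Vector:
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict(image_features: Sequence[float], text_features: Sequence[float]) -> Vector:
    # Hypothetical fixed weights; a real system would learn these from data.
    image_weights = [[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]]   # 3 inputs -> 2 dims
    text_weights = [[0.9, -0.4], [-0.7, 0.2], [0.1, 0.6]]  # 2 inputs -> 3 dims
    head_weights = [[0.4, -0.1, 0.3, 0.2, -0.6],           # 5 fused dims -> 2 classes
                    [-0.3, 0.5, -0.2, 0.1, 0.7]]

    image_embedding = encode(image_weights, image_features)  # modality 1
    text_embedding = encode(text_weights, text_features)     # modality 2
    fused = fuse([image_embedding, text_embedding])          # joint representation
    scores = [sum(w * x for w, x in zip(row, fused)) for row in head_weights]
    return softmax(scores)


probabilities = predict([0.2, 0.7, 0.1], [1.0, 0.0])
print(probabilities)
```

The shared prediction layer sees both embeddings at once, so it can weigh evidence from one modality against the other. Real systems replace these toy encoders with trained image and text networks and may fuse earlier or through attention rather than concatenation.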

Applications of Multimodal Models

Multimodal models have a wide range of applications, showcasing their versatility and power. Here are a few prominent examples:

- Healthcare: In medical diagnostics, multimodal models can analyze medical images, patient records, and even speech patterns to support more accurate diagnoses and treatment plans. Combining these sources helps build a more complete patient profile, which can improve the quality of care.

- Autonomous Vehicles: Self-driving cars use multimodal models to process data from cameras, LiDAR sensors, and radar. By combining these data sources, the vehicles can better understand their surroundings and make more informed driving decisions.

- Customer Service: In customer support, multimodal models can analyze text from chat interactions along with voice tone from phone calls. This holistic understanding allows for more empathetic and effective responses, enhancing the overall customer experience.

- Content Creation: For content generation, multimodal models can create compelling multimedia presentations by synthesizing textual descriptions with relevant images or videos. This capability is especially useful in marketing and advertising.

Advantages of Multimodal Models

The integration of multiple data types offers several advantages:

- Enhanced Understanding: By processing information from different modalities, multimodal models can achieve a more comprehensive understanding of context. For example, a model that combines visual and textual data can better interpret the nuances of a scene or narrative.

- Improved Accuracy: Multimodal models can outperform single-modal systems in accuracy, particularly when the modalities carry complementary information. Cross-referencing several sources helps reduce errors and makes predictions and analyses more reliable.

- Rich Contextual Insights: These models can provide richer insights by integrating diverse forms of data. This leads to more nuanced and contextually relevant outputs, which is particularly beneficial in complex scenarios.
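The error-reduction argument under "Improved Accuracy" can be illustrated with a classical statistics sketch. It assumes two sensors give independent, unbiased estimates of the same quantity with known variances, and combines them by inverse-variance weighting. The function name `fuse_estimates` and the camera and radar numbers are hypothetical, and this is a simplified illustration of why cross-referencing helps, not a description of how any particular multimodal model works internally.

```python
from typing import Sequence, Tuple

Estimate = Tuple[float, float]  # (value, variance)


def fuse_estimates(estimates: Sequence[Estimate]) -> Estimate:
    """Combine independent (value, variance) pairs by inverse-variance weighting."""
    if not estimates:
        raise ValueError("at least one estimate is required")
    if any(variance <= 0 for _, variance in estimates):
        raise ValueError("variances must be positive")

    # Precision is 1 / variance: more reliable sources get more weight.
    precisions = [1.0 / variance for _, variance in estimates]
    total_precision = sum(precisions)
    fused_value = sum(
        value * precision for (value, _), precision in zip(estimates, precisions)
    ) / total_precision
    # The fused variance is never larger than the smallest input variance.
    return fused_value, 1.0 / total_precision


# Hypothetical distance readings in meters: a noisier camera and a more precise radar.
camera = (10.4, 4.0)
radar = (9.8, 1.0)
value, variance = fuse_estimates([camera, radar])
print(value, variance)
```

With these inputs the fused distance is about 9.92 m with variance 0.8, lower than either sensor alone. The benefit depends on the independence assumption: if both sources share the same error, combining them helps much less.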

Challenges and Considerations

Despite their advantages, multimodal models come with their own set of challenges:

- Data Integration: Combining data from different modalities can be technically complex. Ensuring that the various types of information are harmoniously integrated requires advanced algorithms and careful design.

- Computational Resources: Processing multiple data types simultaneously demands significant computational power. This can lead to higher costs and resource requirements, particularly for large-scale implementations.

- Data Quality: The effectiveness of multimodal models depends heavily on the quality of the input data. Poor-quality or inconsistent data across modalities can negatively impact the model’s performance.

The Future of Multimodal Models

Looking ahead, the future of multimodal models appears promising. As technology continues to advance, we can expect further improvements in their ability to integrate and interpret diverse data types. Enhanced algorithms and more efficient computing resources will likely address many of the current challenges, making multimodal models even more powerful and accessible.

Conclusion

Multimodal models represent a significant step forward in the field of artificial intelligence. By integrating and processing multiple types of data simultaneously, these models offer a deeper and more accurate understanding of complex scenarios. From healthcare to autonomous vehicles, the applications of multimodal models are vast and varied, promising to transform how we interact with technology. As we continue to develop and refine these models, their potential to revolutionize various industries and improve our daily lives becomes increasingly apparent.

In summary, multimodal models are more than a technological novelty; they are becoming central to how AI systems integrate information. Adopting them opens up new possibilities and sets the stage for a more interconnected and intelligent world.
