Introduction

Multimodal models are becoming increasingly important in artificial intelligence (AI). These systems integrate and process multiple types of data, such as text, images, and audio, within a single model. This article covers what multimodal models are, why they matter, and where they are applied.

What Are Multimodal Models?

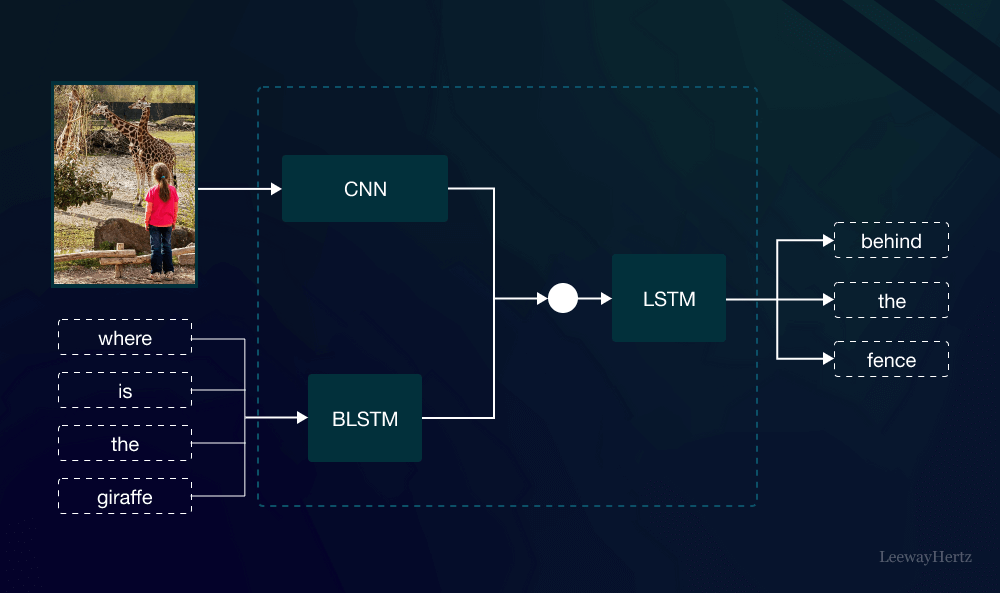

Multimodal models are AI systems designed to handle and combine data from different modalities or sources. Traditional AI models typically work with a single type of data, such as text or images. Multimodal models, by contrast, are built to process information from several sources together. This lets them draw on signals that a single-modality model never sees, which can yield more nuanced insights on tasks that span more than one kind of data.

For instance, a multimodal model might analyze a video by interpreting the visual content and the accompanying audio track together, offering a richer understanding than a model that processes only the visual or only the auditory information.
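One simple way to combine two modalities, as in the video example above, is late fusion: each modality produces its own class probabilities, and the results are averaged. The sketch below is only an illustration under that assumption; the function name `late_fusion`, the `visual_weight` parameter, and the example probabilities are hypothetical, and real systems often fuse learned features inside the model instead.

```python
from typing import Dict


def late_fusion(
    visual_probs: Dict[str, float],
    audio_probs: Dict[str, float],
    visual_weight: float = 0.5,
) -> Dict[str, float]:
    """Fuse per-class probabilities from two modalities by weighted average.

    A label missing from one modality is treated as probability 0.0 there.
    The result is renormalized so the fused probabilities sum to 1.
    """
    if not 0.0 <= visual_weight <= 1.0:
        raise ValueError("visual_weight must be between 0 and 1")

    audio_weight = 1.0 - visual_weight
    labels = set(visual_probs) | set(audio_probs)
    fused = {
        label: visual_weight * visual_probs.get(label, 0.0)
        + audio_weight * audio_probs.get(label, 0.0)
        for label in labels
    }

    total = sum(fused.values())
    if total <= 0.0:
        raise ValueError("fused probabilities sum to zero")
    return {label: p / total for label, p in fused.items()}


# Hypothetical outputs of a visual classifier and an audio classifier
# for the same video clip.
visual = {"dog": 0.6, "cat": 0.4}
audio = {"dog": 0.9, "cat": 0.1}
fused = late_fusion(visual, audio)
print(max(fused, key=fused.get))  # label with the highest fused probability
```

Here the audio track is more confident than the image, so the fused score for "dog" ends up higher than the visual score alone. Raising `visual_weight` shifts trust toward the visual classifier.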

Why Are Multimodal Models Important?

The significance of multimodal models lies in their ability to create more holistic and accurate representations of the world. By integrating various types of data, these models can:

- Enhance Accuracy: Combining data from multiple sources often leads to better accuracy and reliability. For example, in medical imaging, multimodal models can analyze both MRI scans and patient health records to support a more comprehensive diagnosis.

- Improve Contextual Understanding: Multimodal models can understand context more effectively by correlating information from different modalities. In customer service, for instance, a model that processes both text and voice can better grasp the emotional tone of a conversation.

- Enable Advanced Applications: Multimodal models facilitate complex applications that require the integration of diverse data types. This includes everything from autonomous vehicles that combine camera and sensor data to virtual assistants that understand both speech and visual cues.

Applications of Multimodal Models

The versatility of multimodal models makes them applicable in various fields. Here are some key areas where these models are making a significant impact:

- Healthcare: In healthcare, multimodal models are used to improve diagnostic accuracy. For example, combining genetic data, medical images, and patient history can lead to better predictions and personalized treatment plans.

- Entertainment: In the entertainment industry, multimodal models enhance user experiences. They can analyze both video and audio to generate more engaging content or create sophisticated recommendation systems that understand users’ preferences across multiple formats.

- Autonomous Vehicles: For self-driving cars, multimodal models integrate data from cameras, radar, and lidar sensors to navigate and make real-time decisions. This fusion of information is crucial for safe and efficient vehicle operation.

- Customer Service: Multimodal models are transforming customer service by enabling more effective communication. They can process text, voice, and even facial expressions to better understand and respond to customer needs.
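To make the sensor fusion mentioned under Autonomous Vehicles more concrete, the sketch below combines distance estimates from several sensors using inverse-variance weighting, a standard statistical way to merge independent noisy measurements. It is a simplified illustration, not how any particular vehicle stack works; the function name `fuse_measurements` and the sensor readings are hypothetical.

```python
from typing import List, Tuple


def fuse_measurements(readings: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse independent measurements of the same quantity.

    Each reading is (value, variance). Readings with smaller variance
    (more reliable sensors) receive proportionally more weight.
    Returns (fused_value, fused_variance).
    """
    if not readings:
        raise ValueError("at least one reading is required")
    if any(variance <= 0.0 for _, variance in readings):
        raise ValueError("variances must be positive")

    weights = [1.0 / variance for _, variance in readings]
    total_weight = sum(weights)
    fused_value = (
        sum(w * value for w, (value, _) in zip(weights, readings)) / total_weight
    )
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical distance-to-obstacle estimates in meters: (value, variance).
camera = (10.0, 4.0)  # noisier estimate
radar = (12.0, 1.0)   # more precise estimate
distance, variance = fuse_measurements([camera, radar])
print(round(distance, 2), round(variance, 2))
```

The fused estimate lands closer to the radar reading because radar has the lower variance, and the fused variance is smaller than either sensor's own, which is the benefit of combining them.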

Challenges in Developing Multimodal Models

Despite their advantages, multimodal models present several challenges:

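- Data Integration: Combining data from different sources requires sophisticated algorithms and infrastructure. Aligning modalities accurately can be complex, for example matching each video frame to the audio recorded at the same moment when the two streams are sampled at different rates.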

- Computational Complexity: Processing and integrating multiple types of data demands significant computational resources. This can be a barrier, especially for smaller organizations.

- Data Privacy: With the integration of diverse data sources, ensuring data privacy and security becomes increasingly critical. Multimodal models must be designed with robust privacy measures to protect sensitive information.
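The alignment problem noted under Data Integration can be illustrated with timestamps. The sketch below pairs each video frame with the nearest audio chunk in time and drops frames that have no audio close enough. It assumes both streams carry sorted timestamps in seconds; the function name `align_nearest`, the tolerance, and the sample timestamps are hypothetical.

```python
from bisect import bisect_left
from typing import List, Tuple


def align_nearest(
    frame_times: List[float],
    audio_times: List[float],
    tolerance: float,
) -> List[Tuple[int, int]]:
    """Pair each frame with the nearest audio chunk by timestamp.

    audio_times must be sorted in ascending order. Returns a list of
    (frame_index, audio_index) pairs; frames whose nearest audio chunk
    is further away than `tolerance` seconds are skipped.
    """
    pairs = []
    for i, t in enumerate(frame_times):
        pos = bisect_left(audio_times, t)
        # The nearest timestamp is either just before or just after `t`.
        candidates = [j for j in (pos - 1, pos) if 0 <= j < len(audio_times)]
        if not candidates:
            continue
        best = min(candidates, key=lambda j: abs(audio_times[j] - t))
        if abs(audio_times[best] - t) <= tolerance:
            pairs.append((i, best))
    return pairs


# Hypothetical timestamps in seconds for video frames and audio chunks.
frames = [0.0, 0.5, 1.0]
audio = [0.02, 0.48, 1.3]
print(align_nearest(frames, audio, tolerance=0.05))  # [(0, 0), (1, 1)]
```

The third frame is left unpaired because its closest audio chunk is 0.3 seconds away. How to handle such gaps (drop, interpolate, or pad) is a design decision that depends on the task.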

The Future of Multimodal Models

As technology continues to advance, the potential of multimodal models is vast. Future developments may include:

- Improved Algorithms: Advances in machine learning algorithms will enhance the capabilities of multimodal models, making them more efficient and accurate.

- Greater Accessibility: With ongoing research, multimodal models are likely to become more accessible to a broader range of industries and applications.

- Ethical Considerations: Addressing ethical concerns related to data use and privacy will be crucial. Future multimodal models will need to balance innovation with responsible data practices.

Conclusion

Multimodal models represent a significant step forward in artificial intelligence. By integrating and analyzing data from multiple sources, they can offer better accuracy and contextual understanding and enable applications across many domains. As the technology matures, their development and deployment are likely to keep changing how industries work. Realizing that potential depends on addressing the data integration, computational, and privacy challenges described above.
