Hyperparameter tuning is a crucial step in optimizing machine learning models, and it strongly influences how accurate and how efficient an AI system turns out to be. In this article, we look at why hyperparameter tuning matters, the main methods for doing it, and the ways it can enhance AI performance.

Understanding Hyperparameter Tuning

Hyperparameter tuning refers to the process of choosing the settings of a machine learning algorithm that are not learned from the data but are fixed before the learning process begins. These settings, known as hyperparameters, control the learning process and the model architecture, in contrast to model parameters such as the weights of a neural network, which are fitted during training. Examples include the learning rate in gradient descent, the number of hidden layers in a neural network, and the number of clusters in a clustering algorithm.
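To make the distinction concrete, here is a minimal sketch in plain Python. The function name `fit_slope` and the toy data are assumptions made for illustration only. The slope `w` is a parameter, because it is learned from the data, while `learning_rate` and `n_steps` are hyperparameters, because they are fixed before training starts.

```python
def fit_slope(xs, ys, learning_rate=0.01, n_steps=200):
    """Fit y ≈ w * x by gradient descent on the mean squared error."""
    w = 0.0  # parameter: updated from the data during training
    n = len(xs)
    for _ in range(n_steps):  # n_steps: hyperparameter, chosen up front
        # Gradient of mean((w*x - y)^2) with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad  # learning_rate: hyperparameter, chosen up front
    return w

# Toy data that follows y = 2x exactly.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
w = fit_slope(xs, ys, learning_rate=0.01, n_steps=200)
print(w)  # close to 2.0 for these settings
```

Changing `learning_rate` or `n_steps` changes how training proceeds, but neither value is ever updated by the training loop itself.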

The impact of hyperparameter tuning on AI is considerable. Properly tuned hyperparameters can lead to improved model accuracy, efficiency, and generalization. Conversely, poorly chosen hyperparameters can cause a model to underfit, overfit, or fail to converge. Thus, hyperparameter tuning is a critical step in the AI development pipeline.

The Importance of Hyperparameter Tuning

Hyperparameter tuning directly affects the performance and effectiveness of AI models. By carefully selecting and optimizing hyperparameters, practitioners can enhance the model’s ability to make accurate predictions and generalize well to new, unseen data. This optimization process can lead to several benefits:

- Increased Accuracy: Tuning hyperparameters can noticeably improve model accuracy. For instance, a learning rate that is too high can make training overshoot or diverge, while one that is too low makes convergence slow; a well-chosen value lets a neural network converge faster and to a better solution.

- Better Model Generalization: Proper hyperparameter settings can improve a model’s ability to generalize from training data to real-world scenarios. This means the model performs well not just on the data it was trained on but also on new, unseen data.

- Efficient Training: Effective hyperparameter tuning can make the training process more efficient by reducing the number of iterations required to achieve optimal performance. This can save computational resources and time.
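The effect of the learning rate on training efficiency can be illustrated with a small sketch. It assumes a toy objective, f(w) = (w - 3)^2, in place of a real model, and the helper name `steps_to_converge` is hypothetical. It counts how many gradient descent steps each learning rate needs to get within a tolerance of the minimum.

```python
def steps_to_converge(learning_rate, tol=1e-6, max_steps=10_000):
    """Minimize f(w) = (w - 3)^2 starting from w = 0.

    Returns the number of steps needed to get within `tol` of the minimum,
    or None if the run diverges or exhausts `max_steps`.
    """
    w = 0.0
    for step in range(1, max_steps + 1):
        w -= learning_rate * 2 * (w - 3)  # gradient of (w - 3)^2 is 2(w - 3)
        if abs(w - 3) < tol:
            return step
        if abs(w) > 1e12:  # the iterates are blowing up
            return None
    return None

results = {lr: steps_to_converge(lr) for lr in (0.001, 0.01, 0.1, 0.5, 1.1)}
for lr, steps in results.items():
    print(f"learning_rate={lr}: {steps if steps is not None else 'did not converge'}")
```

On this toy problem, small learning rates converge slowly, a moderate one converges in far fewer steps, and a rate above 1.0 diverges. Real models behave less cleanly, but the same trade-off is what tuning the learning rate tries to resolve.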

Methods for Hyperparameter Tuning

There are several methodologies used in hyperparameter tuning, each with its advantages and trade-offs. The most common methods include:

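- Grid Search: This method evaluates every combination from a predefined set of values for each hyperparameter. It is exhaustive over that grid, but it cannot find values that lie between grid points, and the number of combinations multiplies with each added hyperparameter (three hyperparameters with 10 values each already require 1,000 training runs), so it becomes computationally expensive and time-consuming for large hyperparameter spaces.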

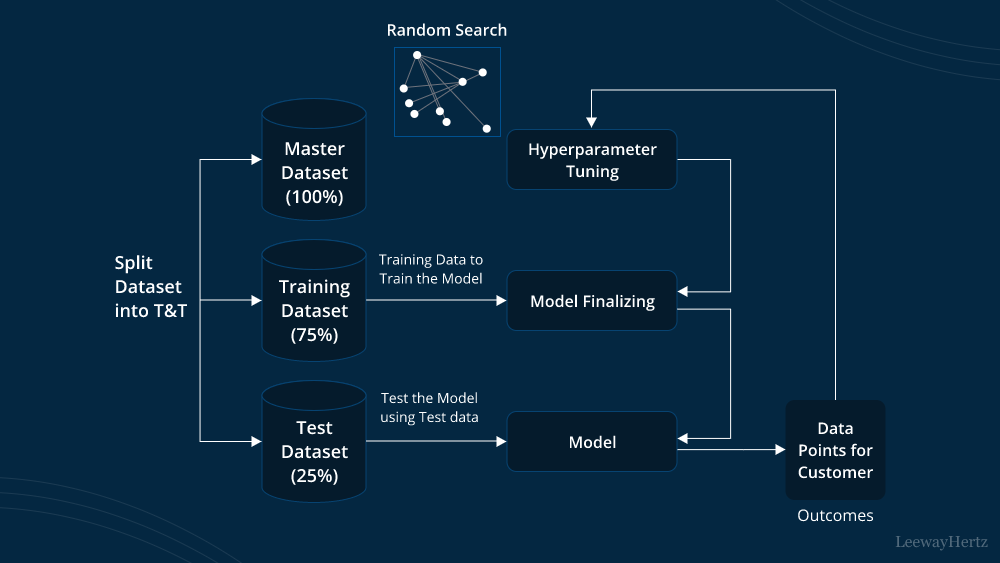

- Random Search: Unlike grid search, random search samples each hyperparameter independently from a predefined range or distribution. For the same number of trials it tries more distinct values of every hyperparameter, which tends to make it more efficient than grid search when only a few hyperparameters strongly affect performance.

- Bayesian Optimization: This approach fits a probabilistic surrogate model (commonly a Gaussian process) to the results of earlier trials and uses it to choose the next configuration to evaluate. It balances exploration of uncertain regions against exploitation of regions that already look promising, which often finds good settings in fewer trials than grid or random search.

- Automated Machine Learning (AutoML): AutoML frameworks offer automated hyperparameter tuning as part of their workflow. These frameworks use advanced algorithms and heuristics to optimize hyperparameters without manual intervention.
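The first two methods above can be sketched in a few lines of plain Python. Everything here is an assumption made for illustration: `validation_loss` is a hypothetical stand-in for training a model and measuring its validation loss, and the two hyperparameters and their ranges are arbitrary.

```python
import itertools
import math
import random

def validation_loss(learning_rate, dropout):
    """Hypothetical stand-in for 'train a model and return its validation loss'."""
    # Toy surface: very sensitive to learning_rate, almost flat in dropout.
    return (math.log10(learning_rate) + 2.0) ** 2 + 0.01 * (dropout - 0.3) ** 2

def grid_search(lr_values, dropout_values):
    """Evaluate every combination of the listed values."""
    trials = list(itertools.product(lr_values, dropout_values))
    best = min(trials, key=lambda t: validation_loss(*t))
    return best, trials

def random_search(n_trials, seed=0):
    """Sample learning_rate log-uniformly from [1e-4, 1] and dropout uniformly from [0, 0.6]."""
    rng = random.Random(seed)
    trials = [(10 ** rng.uniform(-4, 0), rng.uniform(0.0, 0.6)) for _ in range(n_trials)]
    best = min(trials, key=lambda t: validation_loss(*t))
    return best, trials

grid_best, grid_trials = grid_search([1e-4, 1e-3, 1e-2, 1e-1], [0.0, 0.2, 0.4])
rand_best, rand_trials = random_search(n_trials=12)

# Same budget of 12 trials, but the grid only ever tries 4 distinct learning rates.
grid_distinct_lrs = len({lr for lr, _ in grid_trials})
rand_distinct_lrs = len({lr for lr, _ in rand_trials})
print(grid_best, len(grid_trials), grid_distinct_lrs)
print(rand_best, len(rand_trials), rand_distinct_lrs)
```

Which method finds the lower loss in a given run depends on the chosen grid and the random seed, so this sketch is meant to show the mechanics rather than to rank the methods. In practice each call to `validation_loss` would be a full training run, which is why the number of trials is the budget that matters.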

Challenges and Considerations

While hyperparameter tuning is essential, it comes with its own set of challenges. Some of the key considerations include:

- Computational Cost: The process of hyperparameter tuning can be resource-intensive, particularly for complex models and large datasets. It requires significant computational power and time, which can be a limiting factor for some practitioners.

- Overfitting Risk: Hyperparameters are chosen by comparing scores on validation data, so trying many configurations can overfit the hyperparameters to that validation set. Techniques like cross-validation make the estimate more reliable, and a separate test set that plays no part in tuning provides an unbiased check that the chosen hyperparameters generalize.

- Search Space Exploration: Defining the search space for hyperparameters can be challenging. An excessively large search space can lead to longer tuning times, while a poorly defined space may miss optimal values.
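As a rough illustration of using cross-validation during tuning, the sketch below selects a regularization strength for a one-dimensional ridge regression. The synthetic data, the candidate values, and the helper names (`fit_ridge`, `mse`, `cv_score`) are all assumptions chosen to keep the example self-contained; they are not a recommended setup.

```python
import random

def fit_ridge(points, lam):
    """Closed-form 1-D ridge fit without intercept: w = sum(x*y) / (sum(x*x) + lam)."""
    sxy = sum(x * y for x, y in points)
    sxx = sum(x * x for x, _ in points)
    return sxy / (sxx + lam)

def mse(points, w):
    """Mean squared error of the prediction w * x."""
    return sum((y - w * x) ** 2 for x, y in points) / len(points)

def cv_score(points, lam, k=5):
    """Mean validation MSE over k folds for one hyperparameter value."""
    folds = [points[i::k] for i in range(k)]
    scores = []
    for i in range(k):
        train = [p for j, fold in enumerate(folds) if j != i for p in fold]
        w = fit_ridge(train, lam)            # fit on k-1 folds
        scores.append(mse(folds[i], w))      # score on the held-out fold
    return sum(scores) / k

# Synthetic data: y = 2x plus Gaussian noise.
rng = random.Random(0)
data = []
for _ in range(60):
    x = rng.uniform(-3, 3)
    data.append((x, 2.0 * x + rng.gauss(0, 1.0)))

# The test set is set aside and never used while choosing lam.
train_val, test = data[:50], data[50:]

candidates = [0.0, 0.1, 1.0, 10.0, 100.0]
scores = {lam: cv_score(train_val, lam) for lam in candidates}
best_lam = min(scores, key=scores.get)

final_w = fit_ridge(train_val, best_lam)
test_mse = mse(test, final_w)
print(best_lam, final_w, test_mse)
```

The hyperparameter is picked using only the cross-validation scores, and the test set is touched once at the end. Note the cost: every candidate value requires k training runs, which is one reason the size of the search space matters.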

Future Directions

As AI technology continues to advance, hyperparameter tuning is likely to evolve as well. Future developments may include:

- More Efficient Algorithms: Researchers are working on developing more efficient hyperparameter tuning algorithms that reduce computational costs and improve search efficiency.

- Integration with Model Interpretability: Combining hyperparameter tuning with model interpretability techniques may provide deeper insights into how hyperparameters affect model behavior and performance.

- Adaptive Tuning Methods: Adaptive methods that dynamically adjust hyperparameters based on ongoing training performance could lead to more responsive and effective optimization.

Conclusion

The impact of hyperparameter tuning on AI is substantial, influencing the accuracy, efficiency, and generalization of machine learning models. By understanding and applying effective hyperparameter tuning strategies, practitioners can significantly enhance AI system performance. Despite its challenges, ongoing advancements and innovative approaches continue to improve the process, paving the way for more robust and efficient AI solutions. As AI technology evolves, the role of hyperparameter tuning will remain central to achieving optimal outcomes in machine learning applications.
