Building a GPT model can seem daunting, but broken into steps it becomes manageable. In this article, we’ll walk through the essential steps for creating your own Generative Pre-trained Transformer (GPT) model, covering the components, processes, and practical considerations involved.

Understanding the Basics of GPT

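Before diving into how to build a GPT model, it’s important to grasp what GPT is and how it functions. A GPT model is a language model that generates text one token at a time, with each prediction conditioned on the tokens that came before it. It is first pre-trained on a large text corpus to predict the next token, which is what allows it to produce human-like text in response to a prompt. It uses a decoder-only transformer architecture, whose attention mechanism handles long sequences well, making it well suited to natural language processing tasks.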

Components of a GPT Model

To build a GPT model, you need to understand the core components involved:

- Architecture: The transformer architecture is fundamental to GPT. It consists of stacked blocks, each combining masked (causal) self-attention with a feed-forward network. Attention lets the model weigh different parts of the preceding text when predicting the next token.

- Training Data: Quality training data is crucial. You’ll need a large dataset containing diverse text to train your model effectively.

- Tokenization: This is the process of converting text into a sequence of integer tokens (typically subword units) that the model can process. The same tokenizer must be used for training and for generation.
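To make the attention component more concrete, here is a minimal sketch of single-head causal self-attention in plain Python. It is an illustration under simplifying assumptions, not a production implementation: the function names are made up for this example, and the same toy vectors are used as queries, keys, and values, whereas a real model derives the three from learned linear projections of the token embeddings.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    Each argument is a list of vectors, one vector per token position.
    """
    dim = len(queries[0])
    outputs, all_weights = [], []
    for i, query in enumerate(queries):
        # Causal mask: position i may only attend to positions 0..i.
        scores = [
            sum(q * k for q, k in zip(query, keys[j])) / math.sqrt(dim)
            for j in range(i + 1)
        ]
        weights = softmax(scores)
        # The output is the attention-weighted average of the visible values.
        output = [
            sum(w * values[j][d] for j, w in enumerate(weights))
            for d in range(len(values[0]))
        ]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy token vectors (assumed values, for illustration only).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = causal_self_attention(tokens, tokens, tokens)
for position, row in enumerate(weights):
    print(position, [round(w, 3) for w in row])
```

The first position can only attend to itself, while later positions spread their attention over everything that came before them. This is the property that lets a GPT model be trained to predict each next token without seeing it.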

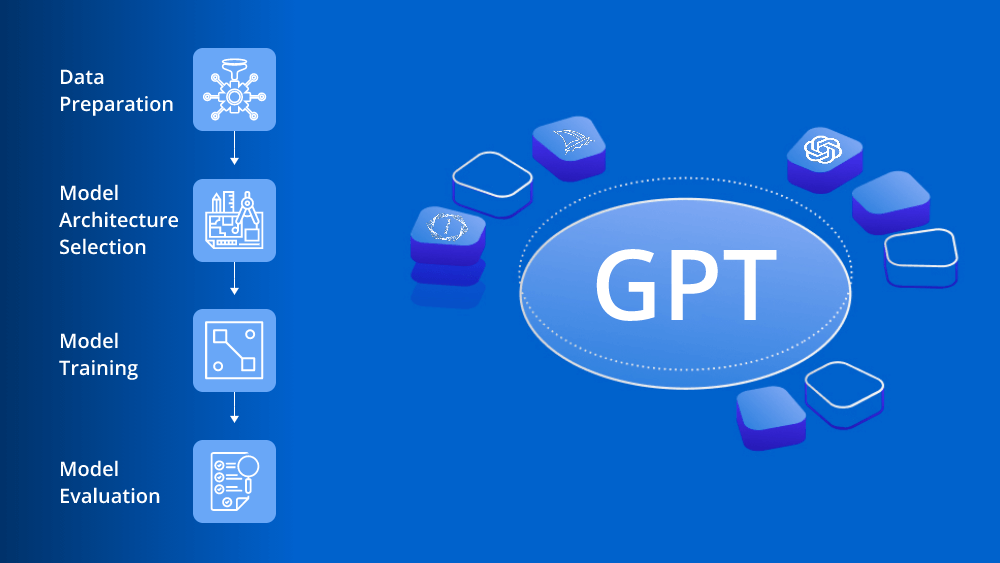

Step-by-Step Guide to Building a GPT Model

Step 1: Gather Your Dataset

The first step is to collect your training data. Look for large, publicly available datasets that cover a wide range of topics. Common sources include books, articles, and websites. Ensure the data is clean and formatted consistently to facilitate effective training.
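One common way to keep a collected corpus clean is to drop near-empty documents and exact duplicates before training. The sketch below shows one possible approach using only the standard library. The function name, the `min_chars` threshold, and the sample documents are assumptions made for this example.

```python
import hashlib


def deduplicate(documents, min_chars=20):
    """Drop very short documents and exact duplicates.

    Whitespace is collapsed first, so documents that differ only in
    spacing count as duplicates.
    """
    seen = set()
    kept = []
    for doc in documents:
        normalized = " ".join(doc.split())
        if len(normalized) < min_chars:
            continue  # too short to be useful training text
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # already have an identical document
        seen.add(digest)
        kept.append(normalized)
    return kept


# Made-up sample documents, for illustration only.
raw_documents = [
    "The transformer architecture relies on attention.",
    "The  transformer architecture relies on   attention.",
    "Too short.",
    "Language models are trained to predict the next token.",
]
corpus = deduplicate(raw_documents)
print(len(raw_documents), "documents in,", len(corpus), "documents kept")
```

Hashing the normalized text keeps memory use low on large corpora, because only the digests need to be held in the `seen` set.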

Step 2: Preprocess the Data

Once you have your dataset, it’s time to preprocess it. This includes:

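- Cleaning: Remove markup and boilerplate that is not part of the text itself, such as HTML tags, navigation menus, and encoding debris.

- Tokenization: Convert the text into integer token IDs using a subword tokenizer, such as the byte-pair encoding (BPE) used by GPT-2.

- Normalization: Apply consistent Unicode normalization and whitespace handling. GPT-style tokenizers generally preserve case and punctuation, so avoid lowercasing or stripping punctuation unless you have a specific reason to.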

Effective preprocessing matters because it directly affects the model’s performance.
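The preprocessing steps above can be sketched in a few lines of Python. This is a simplified illustration rather than a recommended pipeline: the regex-based tag stripping is crude compared to a real HTML parser, and the character-level tokenizer is a stand-in for the subword tokenizers that GPT models typically use. All function names here are hypothetical.

```python
import html
import re
import unicodedata


def clean_text(raw):
    """Strip HTML tags, decode entities, and normalize Unicode and whitespace."""
    text = re.sub(r"<[^>]+>", " ", raw)        # crude tag removal
    text = html.unescape(text)                  # e.g. &amp; becomes &
    text = unicodedata.normalize("NFC", text)   # one canonical form per character
    return re.sub(r"\s+", " ", text).strip()    # collapse runs of whitespace


def build_vocab(texts):
    """Map every distinct character to an integer ID (character-level stand-in)."""
    chars = sorted({ch for text in texts for ch in text})
    return {ch: idx for idx, ch in enumerate(chars)}


def encode(text, vocab):
    return [vocab[ch] for ch in text]


def decode(token_ids, vocab):
    inverse = {idx: ch for ch, idx in vocab.items()}
    return "".join(inverse[i] for i in token_ids)


raw = "<p>Hello &amp; welcome!</p>\n\n<br>GPT"
cleaned = clean_text(raw)
vocab = build_vocab([cleaned])
token_ids = encode(cleaned, vocab)
print(cleaned)
print(token_ids)
```

Note that case and punctuation survive the cleaning step, and that encoding followed by decoding returns the original text. A round trip like this is a useful sanity check for any tokenizer you adopt.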

Step 3: Choose the Right Framework

Select a machine learning framework that supports the transformer architecture. Popular options include PyTorch, TensorFlow, and JAX, all of which make it easier to build and train your model. Look for one that has a strong community and ample documentation, as this will help you troubleshoot issues that may arise.

Step 4: Set Up the Training Environment

Prepare your training environment by ensuring you have the necessary computational resources. Training a GPT model requires significant processing power, so consider using GPUs or cloud-based solutions to accelerate the training process. Install all required libraries and dependencies related to your chosen framework.

Step 5: Configure Model Parameters

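When building a GPT model, the following hyperparameters largely determine its size and how it trains:

- Number of layers: The number of stacked transformer blocks, which sets the model’s depth.

- Hidden size: The width of the token embeddings and of each layer (often written d_model), together with the number of attention heads, determines how much the model can represent.

- Learning rate: The step size of each parameter update. Too high a value makes training unstable; too low a value makes it slow.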

Experiment with different configurations to find the optimal settings for your specific dataset and requirements.
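As a rough illustration of how these settings translate into model size, the sketch below collects them in a configuration object and estimates the parameter count. The field names are hypothetical, and the learning rate is only a placeholder starting point. The default sizes match the published dimensions of the smallest GPT-2 model. The estimate assumes a feed-forward width of four times the hidden size and an output layer that shares weights with the token embeddings, and it ignores biases and layer-norm parameters.

```python
from dataclasses import dataclass


@dataclass
class GPTConfig:
    vocab_size: int = 50257       # number of distinct tokens
    context_length: int = 1024    # maximum sequence length
    n_layers: int = 12            # number of transformer blocks
    n_heads: int = 12             # attention heads per block
    d_model: int = 768            # hidden size
    learning_rate: float = 3e-4   # illustrative starting point, tune for your data

    def __post_init__(self):
        # Each head works on an equal slice of the hidden vector.
        if self.d_model % self.n_heads != 0:
            raise ValueError("d_model must be divisible by n_heads")


def approx_parameter_count(cfg):
    """Rough estimate that ignores biases and layer-norm parameters."""
    # Token embeddings plus learned position embeddings.
    embeddings = (cfg.vocab_size + cfg.context_length) * cfg.d_model
    # Per block: attention projections (4 * d^2) + feed-forward network (8 * d^2).
    per_block = 12 * cfg.d_model ** 2
    return embeddings + cfg.n_layers * per_block


config = GPTConfig()
total = approx_parameter_count(config)
print(f"approximately {total / 1e6:.0f} million parameters")
```

With these defaults the estimate comes to roughly 124 million parameters. Halving `d_model` cuts the per-block cost to a quarter, which is why hidden size is usually the first setting to reduce when compute is limited.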

Step 6: Train the Model

Now comes the exciting part: training your model. Start the training process and monitor its progress. Track the training loss (the cross-entropy between the predicted and actual next token) alongside the loss on held-out data; a validation loss that rises while the training loss keeps falling is a sign of overfitting. Be prepared to adjust parameters and retrain as necessary to improve performance.
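The shape of a training loop can be shown with a deliberately tiny stand-in for a GPT: a bigram model that predicts the next token from the current token alone. The sketch below trains it with plain stochastic gradient descent on the next-token cross-entropy loss, the same objective a GPT uses. It is not a transformer, and the function name, toy text, and hyperparameter values are assumptions for this example. In practice, your chosen framework would handle the gradients and updates.

```python
import math
import random


def train_bigram(token_ids, vocab_size, learning_rate=0.5, epochs=20, seed=0):
    """Train a table of next-token logits with SGD and return the loss history."""
    rng = random.Random(seed)
    # logits[current][next] is the model's raw score for each possible next token.
    logits = [
        [rng.uniform(-0.1, 0.1) for _ in range(vocab_size)]
        for _ in range(vocab_size)
    ]
    pairs = list(zip(token_ids[:-1], token_ids[1:]))
    history = []
    for _ in range(epochs):
        total_loss = 0.0
        for current, target in pairs:
            row = logits[current]
            peak = max(row)
            exps = [math.exp(v - peak) for v in row]
            norm = sum(exps)
            probs = [e / norm for e in exps]
            total_loss += -math.log(probs[target])  # cross-entropy for this pair
            # Gradient of the loss with respect to the logits: probs - one_hot(target).
            for k in range(vocab_size):
                grad = probs[k] - (1.0 if k == target else 0.0)
                row[k] -= learning_rate * grad
        history.append(total_loss / len(pairs))
    return logits, history


text = "abababababababab"  # toy training text
vocab = {ch: idx for idx, ch in enumerate(sorted(set(text)))}
ids = [vocab[ch] for ch in text]
_, losses = train_bigram(ids, len(vocab))
for epoch in range(0, len(losses), 5):
    print(f"epoch {epoch:2d}  mean loss {losses[epoch]:.4f}")
```

The mean loss printed for each epoch should fall steadily on this toy text. On a real dataset, you would log the same quantity for both the training data and a held-out set.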

Step 7: Evaluate the Model

Once training is complete, evaluate the model on held-out data that it never saw during training. Perplexity, the exponential of the average per-token cross-entropy loss, quantifies how well the model predicts that text; lower is better. Because perplexity does not capture everything, also read sample generations to check that the output is coherent and contextually relevant.
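Perplexity is straightforward to compute once you have the log-probability the model assigned to each actual token in the held-out text. The sketch below assumes natural logarithms and uses made-up probabilities for illustration. In a real evaluation, these values would come from the model.

```python
import math


def perplexity(token_log_probs):
    """Exponential of the mean negative log-probability per token."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)


# A model that guesses uniformly among 4 tokens (illustrative values).
uniform_guesser = [math.log(1 / 4)] * 10
# A model that assigns 90% probability to each correct token (illustrative values).
confident_model = [math.log(0.9)] * 10

print(round(perplexity(uniform_guesser), 3))
print(round(perplexity(confident_model), 3))
```

A useful intuition is that a perplexity of N means the model is, on average, as uncertain as if it were choosing uniformly among N tokens. That is why the uniform guesser above scores a perplexity equal to its number of choices.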

Step 8: Fine-Tune the Model

If the evaluation results are not satisfactory, consider tuning the model further. This can involve:

- Adjusting hyperparameters: Tweaking the learning rate or batch size can yield better results.

- Additional training: Training the model on more data or for more steps can improve its grasp of the language.

In the GPT literature, fine-tuning more specifically means continuing to train an already pretrained model on a smaller, task-specific dataset, which is often a practical route when pretraining from scratch is too expensive.

Deploying Your GPT Model

After successfully building and evaluating your GPT model, the next step is deployment. You can deploy your model as a web application or integrate it into existing systems to enable text generation tasks. Ensure you have a robust API in place to facilitate user interactions with the model.
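As one possible shape for such an API, the sketch below exposes a single JSON endpoint using only Python’s standard library. Everything specific in it is an assumption for illustration: the `/generate` path, the `prompt` and `max_tokens` fields, the limits, and the `generate_text` placeholder, which stands in for a call to your trained model. A production deployment would more likely use a dedicated web framework and add authentication and rate limiting.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def generate_text(prompt, max_tokens=20):
    """Placeholder: a real deployment would run the trained model here."""
    return f"{prompt} [model output would appear here]"


def handle_generate(body):
    """Validate a JSON request body and return (status_code, response_dict)."""
    try:
        payload = json.loads(body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return 400, {"error": "body must be valid JSON"}
    if not isinstance(payload, dict):
        return 400, {"error": "body must be a JSON object"}
    prompt = payload.get("prompt")
    if not isinstance(prompt, str) or not prompt:
        return 400, {"error": "'prompt' must be a non-empty string"}
    max_tokens = payload.get("max_tokens", 20)
    if isinstance(max_tokens, bool) or not isinstance(max_tokens, int):
        return 400, {"error": "'max_tokens' must be an integer"}
    if not 1 <= max_tokens <= 256:
        return 400, {"error": "'max_tokens' must be between 1 and 256"}
    return 200, {"completion": generate_text(prompt, max_tokens)}


class GenerateHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/generate":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, response = handle_generate(self.rfile.read(length))
        data = json.dumps(response).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def serve(port=8000):
    """Start the server on localhost; this call blocks until interrupted."""
    HTTPServer(("127.0.0.1", port), GenerateHandler).serve_forever()


# The request logic can be exercised without starting the server.
print(handle_generate(b'{"prompt": "Once upon a time"}'))
print(handle_generate(b"not json"))
```

Keeping validation in a plain function, separate from the HTTP handler, makes the request logic easy to test and lets you swap in a different web framework later without rewriting it. Calling `serve()` starts the server locally.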

Conclusion

Building a GPT model is an ambitious but rewarding project. By following these steps (gathering data, preprocessing, configuring parameters, training, evaluating, and deploying), you can create a functional language model tailored to your needs. Whether for personal use or to explore new applications, understanding how a GPT model is built gives you a solid foundation for further work in natural language processing. Happy building!
