Introduction to Parameter-efficient Fine-tuning (PEFT)

Parameter-efficient Fine-tuning (PEFT) is a family of methods for adapting large pre-trained models to new tasks while training only a small fraction of their parameters. As deep learning models grow in size and complexity, fine-tuning every weight becomes increasingly expensive in compute, memory, and storage. PEFT addresses this by keeping most of the pre-trained model fixed and adjusting, or adding, a comparatively small set of parameters for each task.

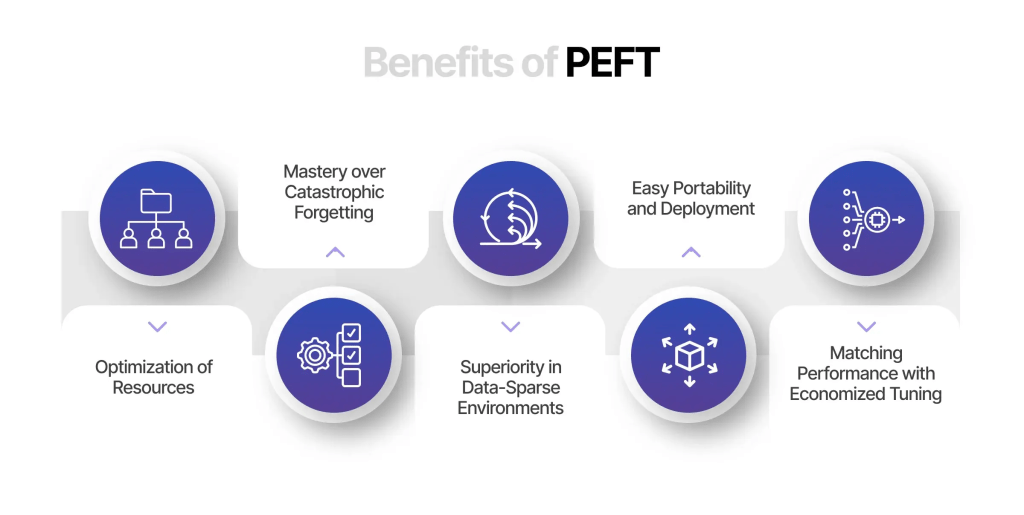

Benefits of Parameter-efficient Fine-tuning (PEFT)

- Reduced Computational Costs

One of the most significant advantages of PEFT is its ability to minimize computational expenses. Full fine-tuning updates every weight in the model, which can be resource-intensive. In contrast, PEFT modifies only a small subset of parameters, so fewer gradients and optimizer states have to be computed and stored, which lowers memory use and energy consumption.

- Faster Training Times

By concentrating on a limited number of parameters, PEFT can accelerate the training process. This is particularly beneficial for organizations with limited resources or tight deadlines. With reduced training times, data scientists can iterate more rapidly and achieve results sooner.

- Preservation of Knowledge

PEFT allows models to retain their pre-trained knowledge while adapting to new tasks. This is crucial for transfer learning, where leveraging existing model capabilities can lead to better performance on specialized tasks. Because most of the original weights stay frozen, the model is less prone to forgetting what it learned from large datasets during pre-training.

- Increased Accessibility

Parameter-efficient Fine-tuning democratizes access to advanced machine learning techniques. Smaller organizations or individual researchers can take advantage of high-performing models without the need for extensive infrastructure or budgets. This opens up opportunities for innovation across various fields.

Techniques in Parameter-efficient Fine-tuning (PEFT)

Several techniques have been developed to implement PEFT effectively. Understanding these methods can help practitioners choose the best approach for their specific needs.

1. Adapter Layers

Adapter layers are a popular PEFT technique. These lightweight modules, typically small bottleneck networks, are inserted between the existing layers of a pre-trained model. During fine-tuning only the adapters are trained to capture task-specific information, while the original parameters stay frozen. The method is efficient because it adds few parameters and retains the pre-trained model’s knowledge.
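To make the idea concrete, here is a minimal sketch of one adapter forward pass in plain Python. It assumes a bottleneck design (down-projection, ReLU, up-projection, residual connection) and a zero-initialized up-projection. The function names and sizes are illustrative, not taken from any particular library.

```python
def matvec(matrix, vector):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def adapter_forward(hidden, down_proj, up_proj):
    """Bottleneck adapter: down-project, apply ReLU, up-project, add residual."""
    bottleneck = [max(0.0, v) for v in matvec(down_proj, hidden)]
    update = matvec(up_proj, bottleneck)
    return [h + u for h, u in zip(hidden, update)]

hidden_size, bottleneck_size = 4, 2  # illustrative sizes (assumption)

# Trainable adapter weights. The up-projection starts at zero (an assumed
# initialization), so the adapter initially leaves the frozen model's
# activations unchanged.
down_proj = [[0.1] * hidden_size for _ in range(bottleneck_size)]  # 2 x 4
up_proj = [[0.0] * bottleneck_size for _ in range(hidden_size)]    # 4 x 2

hidden = [1.0, -2.0, 0.5, 3.0]  # activation coming from a frozen layer
output = adapter_forward(hidden, down_proj, up_proj)

# Only these weights would be trained (biases omitted for brevity).
adapter_params = 2 * hidden_size * bottleneck_size
print(output, adapter_params)
```

With the zero-initialized up-projection, the adapter starts out as an identity mapping, so training begins from the pre-trained model's behavior and gradually learns a task-specific correction.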

2. Low-Rank Adaptation (LoRA)

Low-Rank Adaptation (LoRA) is another effective PEFT method. It keeps each pre-trained weight matrix frozen and represents the update to it as the product of two much smaller matrices of low rank, significantly reducing the number of parameters that need to be trained. Because only the two small matrices are learned, LoRA provides a flexible framework for fine-tuning while maintaining computational efficiency.
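The saving is easy to see with some arithmetic. The sketch below assumes the usual LoRA formulation, in which a frozen weight matrix W of shape d_out x d_in is combined with a trainable update B·A, where A has shape r x d_in and B has shape d_out x r. The layer sizes and helper names are chosen only for illustration.

```python
def lora_param_counts(d_in, d_out, rank):
    """Return (parameters in the full matrix, parameters in the LoRA factors)."""
    full = d_in * d_out
    lora = rank * (d_in + d_out)
    return full, lora

def matvec(matrix, vector):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def lora_forward(x, W, A, B, scale=1.0):
    """Compute y = W x + scale * B (A x); W is frozen, A and B are trainable."""
    base = matvec(W, x)
    low_rank = matvec(B, matvec(A, x))
    return [b + scale * l for b, l in zip(base, low_rank)]

# Parameter count for one hypothetical 4096 x 4096 layer with rank 8.
full, lora = lora_param_counts(d_in=4096, d_out=4096, rank=8)
print(full, lora, f"{100 * lora / full:.2f}%")  # 16777216 65536 0.39%

# Tiny rank-1 example of the forward pass.
W = [[1.0, 0.0], [0.0, 1.0]]  # frozen pre-trained weights (2 x 2)
A = [[1.0, 1.0]]              # trainable, shape r x d_in = 1 x 2
B = [[0.5], [0.0]]            # trainable, shape d_out x r = 2 x 1
y = lora_forward([2.0, 3.0], W, A, B)
print(y)  # [4.5, 3.0]
```

In this example the two low-rank factors hold well under one percent of the parameters of the full matrix, which is where the reduction in trainable parameters comes from.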

3. Prompt Tuning

Prompt tuning learns a small set of continuous prompt vectors, often called soft prompts, that are prepended to the input embeddings to guide the model’s responses. The model’s own parameters stay frozen and only the prompt vectors are trained, which distinguishes the technique from manually rewording text prompts. This approach can enhance performance on specific tasks without extensive retraining.
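One widely used form of prompt tuning trains continuous "soft prompt" vectors and feeds them to a frozen model ahead of the real token embeddings. The sketch below shows only how the input sequence is assembled. The sizes, values, and function name are illustrative assumptions.

```python
def prepend_soft_prompt(soft_prompt, token_embeddings):
    """Return the sequence the frozen model sees: prompt vectors, then tokens."""
    return soft_prompt + token_embeddings

embed_dim = 3            # illustrative embedding size (assumption)
num_prompt_vectors = 2   # illustrative prompt length (assumption)

# Trainable soft prompt: one vector per virtual token.
soft_prompt = [[0.0] * embed_dim for _ in range(num_prompt_vectors)]

# Embeddings of the actual input tokens, looked up in the frozen embedding table.
token_embeddings = [
    [0.2, 0.1, 0.4],
    [0.9, 0.3, 0.5],
    [0.7, 0.7, 0.1],
]

model_input = prepend_soft_prompt(soft_prompt, token_embeddings)
trainable_params = num_prompt_vectors * embed_dim

print(len(model_input), trainable_params)  # 5 6
```

During training, gradients would flow back through the frozen model into `soft_prompt` only, so the number of trainable parameters depends on the prompt length and embedding size rather than on the size of the model.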

4. BitFit

BitFit fine-tunes only the bias terms of a model while leaving all other parameters unchanged. Because bias vectors make up a very small share of a typical network’s parameters, this minimalist approach leads to efficient training with minimal computational overhead.
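As a rough illustration of how few parameters this involves, the sketch below selects bias terms by name and computes their share of the total. The parameter names and sizes describe a hypothetical two-layer model, and the `.bias` naming convention is an assumption.

```python
# Hypothetical parameter names and sizes for a tiny two-layer model.
param_sizes = {
    "layer1.weight": 768 * 768,
    "layer1.bias": 768,
    "layer2.weight": 768 * 768,
    "layer2.bias": 768,
}

def select_bias_params(sizes):
    """Return the names of parameters that BitFit would train."""
    return {name for name in sizes if name.endswith(".bias")}

trainable = select_bias_params(param_sizes)
trainable_count = sum(param_sizes[name] for name in trainable)
total_count = sum(param_sizes.values())
fraction = trainable_count / total_count

print(sorted(trainable))
print(f"{trainable_count} of {total_count} parameters ({100 * fraction:.2f}%)")
```

Everything not returned by `select_bias_params` would be frozen, which in this toy configuration leaves roughly a tenth of a percent of the parameters trainable.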

Model Training with Parameter-efficient Fine-tuning (PEFT)

The process of training a model using PEFT techniques involves several key steps:

1. Selecting a Pre-trained Model

The first step is to choose an appropriate pre-trained model that aligns with the desired task. The selection should consider the model’s architecture and its suitability for the specific application.

2. Implementing the PEFT Technique

Once a model is selected, practitioners can implement a suitable PEFT technique, such as adapter layers, LoRA, or prompt tuning. The choice of method will depend on the task requirements and available resources.

3. Fine-tuning the Model

After implementing the chosen PEFT technique, the model can be fine-tuned on the specific dataset. This phase involves adjusting only the selected parameters while keeping the rest of the model intact. It’s essential to monitor performance metrics throughout this process to ensure effective learning.
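The following toy sketch shows what "adjusting only the selected parameters" means in a training loop. It assumes a one-dimensional linear model whose weight is frozen and whose bias is trainable, fitted with plain gradient descent on mean squared error. The data, learning rate, and function name are made up for illustration.

```python
def fine_tune_bias(weight, bias, data, lr=0.1, steps=200):
    """Gradient descent on mean squared error that updates only the bias."""
    losses = []
    for _ in range(steps):
        errors = [(weight * x + bias) - y for x, y in data]
        losses.append(sum(e * e for e in errors) / len(errors))
        grad_bias = 2 * sum(errors) / len(errors)
        bias -= lr * grad_bias  # trainable parameter: receives an update
        # weight is frozen: no gradient step is applied to it
    return weight, bias, losses

# "Pre-trained" model y = 2x + 0; the new task follows y = 2x + 1.
data = [(x, 2.0 * x + 1.0) for x in (0.0, 1.0, 2.0, 3.0)]
weight, bias, losses = fine_tune_bias(weight=2.0, bias=0.0, data=data)

print(weight, round(bias, 4))           # 2.0 1.0
print(losses[0], losses[-1] < 1e-12)    # 1.0 True
```

The recorded `losses` list is a simple stand-in for the performance metrics mentioned above: it lets you confirm that the loss falls during training while the frozen weight keeps its pre-trained value.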

4. Evaluating Model Performance

Following fine-tuning, the model’s performance should be thoroughly evaluated using relevant metrics. This step helps determine the effectiveness of the PEFT approach and whether further adjustments are necessary.

5. Iteration and Optimization

Model training is often an iterative process. Based on evaluation results, practitioners may need to revisit the PEFT techniques employed, adjust hyperparameters, or select different methods to optimize performance.

Conclusion

Parameter-efficient Fine-tuning (PEFT) offers a practical way to optimize model performance with reduced resource demands. By employing techniques such as adapter layers, LoRA, prompt tuning, and BitFit, practitioners can adapt pre-trained models to new tasks while training only a small share of their parameters. As models continue to grow, PEFT is likely to remain an important tool for researchers and organizations seeking to use machine learning in a more efficient and accessible manner.
