As AI adoption accelerates across industries, the pressure to build scalable, adaptable, and cost-effective systems is growing. Traditional, one-size-fits-all AI platforms are often too rigid to keep up with rapidly evolving technologies and business needs. That’s why many organizations are turning to a modular AI stack: an architecture designed for flexibility, customization, and streamlined development.

Defining a Modular AI Stack

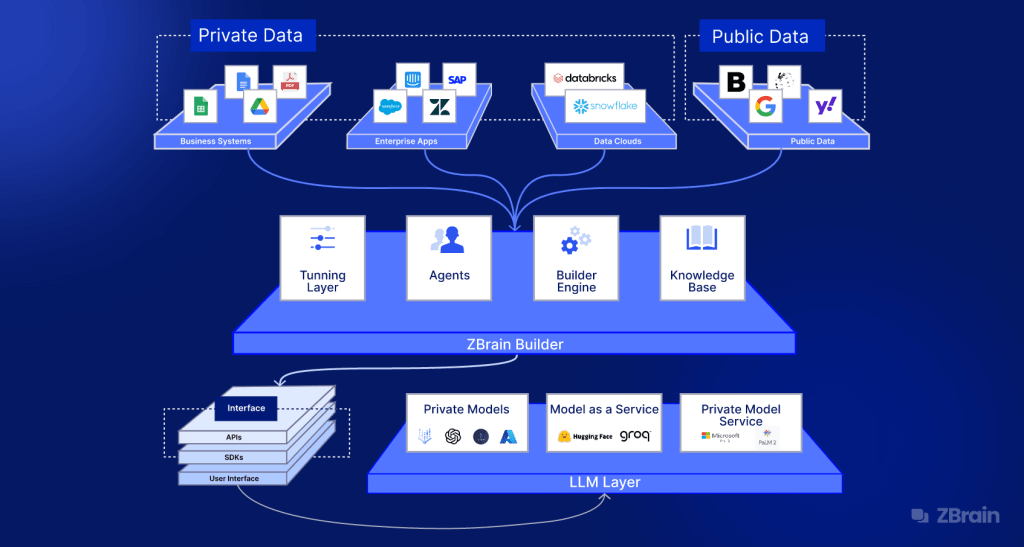

A modular AI stack refers to an architectural approach that divides the AI pipeline into separate, plug-and-play components. Each layer—ranging from data management and model training to deployment and monitoring—can be independently configured and optimized. This approach stands in contrast to integrated, monolithic systems where changes in one component often require modifications throughout the stack.

With a modular structure, teams can pick and choose the tools and technologies that best align with their requirements. This makes the modular AI stack ideal for businesses looking to build tailored AI solutions without being locked into a single ecosystem or vendor.
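The plug-and-play idea can be sketched in a few lines of Python. This is only an illustration, not a reference design: the `Model` interface and the `MeanBaseline` and `DoubleIt` classes are hypothetical names invented for the example. The point is that the serving code depends on an interface rather than on a particular implementation.

```python
from typing import Protocol, Sequence


class Model(Protocol):
    """Anything with a matching predict method can fill the model slot."""

    def predict(self, rows: Sequence[float]) -> list[float]: ...


class MeanBaseline:
    """Hypothetical model: predicts the input mean for every row."""

    def predict(self, rows: Sequence[float]) -> list[float]:
        mean = sum(rows) / len(rows)
        return [mean for _ in rows]


class DoubleIt:
    """Hypothetical replacement model exposing the same interface."""

    def predict(self, rows: Sequence[float]) -> list[float]:
        return [2 * value for value in rows]


def serve(model: Model, rows: Sequence[float]) -> list[float]:
    """The serving layer only knows about the Model interface."""
    return model.predict(rows)


# Swapping the model does not require any change to serve().
print(serve(MeanBaseline(), [1.0, 2.0, 3.0]))  # [2.0, 2.0, 2.0]
print(serve(DoubleIt(), [1.0, 2.0, 3.0]))      # [2.0, 4.0, 6.0]
```

In a real stack the interface would more likely be an HTTP API or a container contract than a Python class, but the dependency direction is the same.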

Why Modularity Matters in AI

The modularity of this stack brings several significant advantages. First, it increases agility: AI systems built on a modular foundation are easier to upgrade and iterate on. When a new tool or model becomes available, it can be swapped in without overhauling the entire infrastructure.

Second, modularity enables a best-of-breed approach. Instead of relying on a single vendor for every layer of the stack, organizations can integrate specialized tools that excel in their respective functions—be it advanced model training, robust data pipelines, or powerful inference engines.

Lastly, the modular AI stack fosters better collaboration. Since each component operates independently, different teams (data science, engineering, DevOps, compliance) can work on their respective modules without stepping on each other’s toes.

Key Building Blocks of a Modular AI Stack

To understand how the modular AI stack works, let’s break down its typical layers:

- Data Collection & Processing: This module handles raw data ingestion, cleansing, transformation, and labeling.

- Model Development: Includes training models using machine learning frameworks.

- Model Serving & Inference: Once trained, models are deployed for inference using platforms that support real-time or batch predictions.

- Workflow Orchestration: Manages the sequence and dependencies of tasks using orchestration tools.

- Monitoring & Governance: Offers visibility into model performance, data drift, fairness, and compliance tracking.

- Deployment Infrastructure: Covers the environments where models are deployed, such as containers, edge devices, or cloud services.

Each of these modules can be exposed through APIs or packaged as containers, which lets them communicate and interoperate without sharing internals.
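To make the orchestration layer concrete, here is a minimal sketch that runs a few of the layers above in dependency order. It assumes toy stage functions and a shared context dictionary, both invented for this example; the ordering comes from `graphlib.TopologicalSorter` in the Python standard library (3.9+), standing in for a full orchestration tool.

```python
from graphlib import TopologicalSorter


# Hypothetical stage functions; each reads from and adds to a shared context.
def ingest(ctx):
    ctx["raw"] = [3, 1, None, 2]


def clean(ctx):
    ctx["clean"] = [x for x in ctx["raw"] if x is not None]


def train(ctx):
    ctx["model"] = {"mean": sum(ctx["clean"]) / len(ctx["clean"])}


def serve(ctx):
    ctx["prediction"] = ctx["model"]["mean"]


STAGES = {"ingest": ingest, "clean": clean, "train": train, "serve": serve}

# Each stage maps to the set of stages that must finish before it starts.
DEPENDENCIES = {
    "clean": {"ingest"},
    "train": {"clean"},
    "serve": {"train"},
}


def run_pipeline():
    """Run every stage once, in an order that respects the dependencies."""
    ctx = {}
    order = list(TopologicalSorter(DEPENDENCIES).static_order())
    for name in order:
        STAGES[name](ctx)
    return order, ctx


order, ctx = run_pipeline()
print(order)              # ['ingest', 'clean', 'train', 'serve']
print(ctx["prediction"])  # 2.0
```

Because the dependencies are declared as data, adding or replacing a stage means editing `STAGES` and `DEPENDENCIES` rather than rewriting the runner.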

Custom AI Solutions Made Simple

One of the standout benefits of using a modular AI stack is the ease of creating custom AI applications. For instance, a media company working with large volumes of video data can implement a stack optimized for image and audio processing. Meanwhile, a fintech startup might prioritize anomaly detection and real-time transaction analysis.

Rather than trying to retrofit a general-purpose AI platform, organizations can build an architecture that directly maps to their industry requirements, datasets, and compliance needs. This level of customization can improve performance while also shortening development timelines and reducing costs.

How Modular AI Simplifies Workflow Automation

Building and deploying AI models is often a complex, multi-step process involving various teams. A modular AI stack brings much-needed structure to these workflows by creating clear boundaries between functions. This allows for easier automation of repetitive tasks like data preprocessing, model validation, or CI/CD for machine learning.
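As one example of a step that is easy to automate once boundaries are clear, the sketch below shows a simple model validation gate. The function names, the sample labels, and the `min_gain` threshold are all assumptions made for illustration; a real gate would use whatever metrics and thresholds the team has agreed on.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def validation_gate(candidate_acc, baseline_acc, min_gain=0.0):
    """Return True if the candidate beats the baseline by at least min_gain."""
    return candidate_acc >= baseline_acc + min_gain


labels = [1, 0, 1, 1]
baseline_acc = accuracy([1, 0, 0, 0], labels)   # 2 of 4 correct
candidate_acc = accuracy([1, 0, 1, 0], labels)  # 3 of 4 correct

# A CI job could call this and block promotion when it returns False.
print(validation_gate(candidate_acc, baseline_acc, min_gain=0.1))  # True
```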

Automation becomes more manageable when each module can be triggered, scaled, or updated independently. For example, if a new data source is added, only the ingestion module might need to change. As long as that module keeps emitting data in the format downstream stages expect, the rest of the stack, from training to inference, remains unaffected. This modularity leads to smoother operations, faster iteration cycles, and fewer bottlenecks.
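The data-source example can be sketched as a small reader registry. Everything here is hypothetical (the `reader` decorator, the source names, the sample records); it simply shows that supporting a new source means adding one reader, while the downstream function is left untouched.

```python
import csv
import io
import json

# Registry of ingestion functions, keyed by a source-type name.
READERS = {}


def reader(kind):
    """Decorator that registers an ingestion function under `kind`."""
    def register(func):
        READERS[kind] = func
        return func
    return register


@reader("csv")
def read_csv(text):
    return [dict(row) for row in csv.DictReader(io.StringIO(text))]


# Supporting a new source adds a reader; nothing downstream is edited.
@reader("jsonl")
def read_jsonl(text):
    return [json.loads(line) for line in text.splitlines() if line.strip()]


def ingest(kind, text):
    """Single entry point used by the rest of the stack."""
    return READERS[kind](text)


def count_records(records):
    """Stand-in for downstream stages such as training or inference."""
    return len(records)


csv_rows = ingest("csv", "id,amount\n1,10\n2,20\n")
jsonl_rows = ingest("jsonl", '{"id": 3, "amount": 30}\n')
print(count_records(csv_rows), count_records(jsonl_rows))  # 2 1
```

Note that the CSV reader yields string values while the JSON reader yields typed values, so a real ingestion module would also normalize records to one schema before handing them on.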

Scalable and Future-Ready AI Infrastructure

AI technologies evolve rapidly. New techniques, frameworks, and hardware optimizations emerge constantly. A rigid AI stack can quickly become obsolete or require significant reengineering to stay current. In contrast, a modular AI stack supports future growth by allowing individual components to evolve independently.

Need to shift from on-premise to cloud-based model deployment? Replace the deployment module. Want to integrate a new LLM or transformer architecture? Swap out the model development layer. As long as the interfaces between modules stay stable, this plug-and-play capability keeps the stack relevant and scalable as your needs grow.
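One way to picture swapping the deployment module is to select it from configuration. The sketch below assumes two made-up deployer classes and placeholder values for host and region; the deployers only return a description string, whereas real ones would call the relevant infrastructure APIs.

```python
from dataclasses import dataclass


@dataclass
class OnPremDeployer:
    host: str  # hypothetical internal hostname

    def deploy(self, model_name: str) -> str:
        return f"{model_name} -> on-prem host {self.host}"


@dataclass
class CloudDeployer:
    region: str  # placeholder region name

    def deploy(self, model_name: str) -> str:
        return f"{model_name} -> cloud region {self.region}"


# Maps a config value to the class that implements that deployment target.
DEPLOYERS = {"on_prem": OnPremDeployer, "cloud": CloudDeployer}


def build_deployer(config: dict):
    """Create the deployer named by config['kind'] from the other keys."""
    options = dict(config)  # copy so the caller's config is not mutated
    kind = options.pop("kind")
    return DEPLOYERS[kind](**options)


def release(model_name: str, config: dict) -> str:
    return build_deployer(config).deploy(model_name)


# Moving from on-premise to cloud is a config change, not a code change.
print(release("churn-v3", {"kind": "on_prem", "host": "ml01.internal"}))
print(release("churn-v3", {"kind": "cloud", "region": "eu-west-1"}))
```

In practice a migration also touches networking, access control, and cost monitoring, so the swap is rarely this small, but keeping the rest of the stack behind one `deploy` call limits how far those changes spread.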

Industry Use Cases for Modular AI Stacks

The modular approach to AI is being adopted across multiple industries:

- Healthcare: Custom NLP and image processing modules assist in diagnostics and patient data analysis.

- Retail: Real-time recommendation systems combine customer behavior data with dynamic pricing engines.

- Manufacturing: Predictive maintenance tools integrate with IoT data processing and alerting modules.

- Banking: Modular fraud detection systems offer real-time insights with secure, auditable layers for compliance.

Each use case benefits from the ability to combine high-performance components into a unified, business-specific solution.

Final Thoughts

In today’s fast-moving AI landscape, agility, customization, and operational efficiency are critical. The modular AI stack provides the architectural foundation to meet these demands. By dividing the AI pipeline into independently operable modules, organizations gain the freedom to innovate, automate, and adapt without being bogged down by legacy systems or inflexible platforms.

Whether you’re building your first AI solution or scaling across multiple departments, a modular approach can simplify your workflows and help future-proof your AI strategy.
