Introduction

As businesses and organizations rely on artificial intelligence (AI) for a growing range of applications, the models behind those applications become attractive targets for attackers. This article explains why AI model security matters and outlines practical strategies for protecting AI systems from potential threats.

Understanding AI Model Security

AI model security refers to the measures and practices implemented to protect artificial intelligence models from unauthorized access, tampering, or exploitation. Given that AI models are often used to make critical decisions and handle sensitive data, ensuring their security is essential for maintaining trust and reliability in AI systems.

Why AI Model Security Matters

- Protecting Sensitive Data: AI models frequently process confidential information, including personal data and proprietary business details. Without proper AI model security, this data could be exposed to unauthorized parties, leading to privacy breaches and legal consequences.

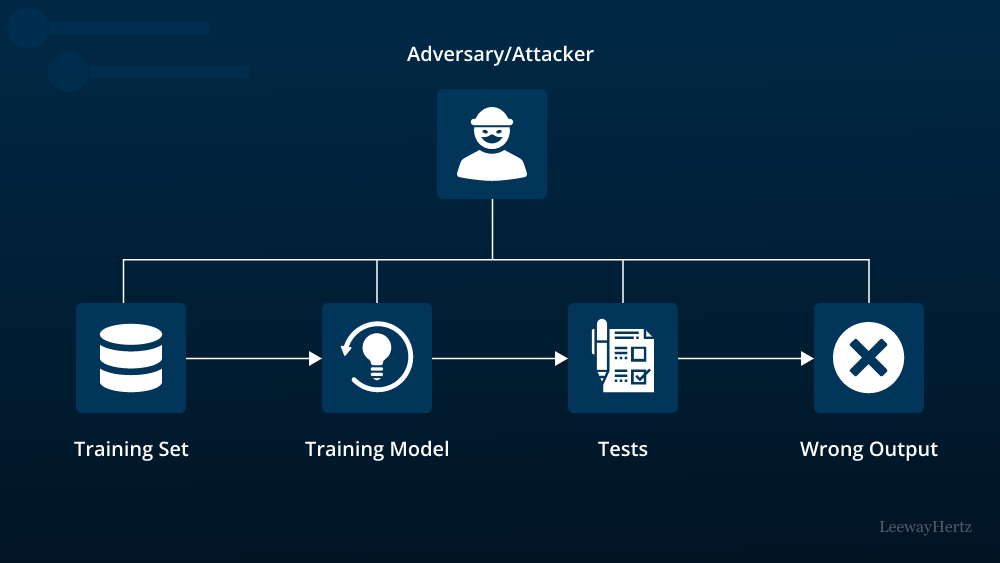

- Preventing Model Manipulation: Attackers may attempt to manipulate AI models to produce biased or incorrect results. Ensuring AI model security helps prevent such tampering and maintains the integrity of the model’s outputs.

- Avoiding Financial Loss: Compromised AI models can result in significant financial losses. For instance, fraud detection models that are not securely protected may be bypassed by malicious actors, leading to substantial financial damage.

Key Strategies for Enhancing AI Model Security

- Data Encryption and Secure Storage: To safeguard AI models, it is crucial to encrypt both the data used to train the models and the models themselves. Data encryption ensures that sensitive information remains confidential, even if intercepted by unauthorized individuals. Secure storage practices, including the use of encrypted databases and access controls, further enhance AI model security.

- Regular Security Audits and Vulnerability Assessments: Conducting regular security audits and vulnerability assessments is essential for identifying potential weaknesses in AI models. These evaluations help detect and address security issues before they can be exploited by malicious actors. Regular updates and patches should be applied to fix any vulnerabilities discovered during these assessments.

- Robust Access Controls and Authentication: Implementing strict access controls and authentication mechanisms is vital for protecting AI models. Access should be restricted to authorized personnel only, and multi-factor authentication can add an extra layer of security. This approach helps prevent unauthorized access and reduces the risk of insider threats.

- Adversarial Training and Testing: Adversarial training involves exposing AI models to deliberately perturbed (adversarial) inputs during the training process. By simulating potential attacks, models can be better prepared to handle real-world adversarial scenarios. Additionally, regular testing against adversarial attacks helps identify and mitigate vulnerabilities, enhancing overall AI model security.

- Model Hardening Techniques: Model hardening involves applying various techniques to make AI models more resilient to attacks. This may include methods such as adding noise to inputs to make model inversion attacks harder, or using techniques that keep a model's predictions stable under small input perturbations. Hardening strategies help strengthen AI model security by reducing the likelihood of successful attacks.

- Monitoring and Incident Response: Continuous monitoring of AI models is crucial for detecting unusual activities or potential security breaches. Implementing real-time monitoring systems allows for the early detection of anomalies and swift response to potential threats. Having a well-defined incident response plan in place ensures that appropriate actions are taken promptly in the event of a security incident.

- Collaboration and Knowledge Sharing: Collaboration within the AI community is essential for advancing AI model security. Sharing knowledge and best practices helps organizations stay informed about emerging threats and effective countermeasures. Engaging with industry groups, participating in conferences, and contributing to research efforts can enhance collective AI model security.
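To make the secure storage strategy above a little more concrete, here is a minimal sketch of tamper detection for a stored model file. It covers integrity only, not encryption: encrypting the file itself is best left to a vetted cryptography library. The function names `sign_model` and `verify_model`, the demo key, and the fake weights are all assumptions made for illustration; in practice the key would come from a secret manager rather than source code.

```python
import hashlib
import hmac


def sign_model(model_bytes: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag for a serialized model (hypothetical helper)."""
    return hmac.new(key, model_bytes, hashlib.sha256).hexdigest()


def verify_model(model_bytes: bytes, key: bytes, expected_tag: str) -> bool:
    """Recompute the tag and compare in constant time before loading the model."""
    actual_tag = sign_model(model_bytes, key)
    return hmac.compare_digest(actual_tag, expected_tag)


if __name__ == "__main__":
    # Placeholder values: a real key should never be hard-coded.
    key = b"demo-key-load-me-from-a-secret-manager"
    weights = b"\x00\x01fake-serialized-weights"

    tag = sign_model(weights, key)
    print(verify_model(weights, key, tag))            # True
    print(verify_model(weights + b"\xff", key, tag))  # False: file was modified
```

Storing the tag alongside the model and refusing to load on a mismatch is one simple way to notice that a stored model has been altered.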
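The adversarial training strategy above can be sketched on a toy model. The example below assumes a tiny logistic regression classifier written in plain Python and uses the fast gradient sign method (FGSM) to craft perturbed inputs; FGSM is just one well-known attack chosen for illustration, and the article does not prescribe a specific one. The function names, learning rate, and `epsilon` values are hypothetical.

```python
import math


def predict(w, b, x):
    """Probability of class 1 under a logistic regression model."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))


def loss(w, b, x, y):
    """Binary cross-entropy for a single example."""
    p = predict(w, b, x)
    tiny = 1e-12  # avoids log(0)
    return -(y * math.log(p + tiny) + (1 - y) * math.log(1 - p + tiny))


def sign(v):
    return (v > 0) - (v < 0)


def fgsm(w, b, x, y, epsilon):
    """Shift each feature by epsilon in the direction that increases the loss.

    For logistic regression, d(loss)/dx_i = (p - y) * w_i.
    """
    p = predict(w, b, x)
    return [xi + epsilon * sign((p - y) * wi) for xi, wi in zip(x, w)]


def sgd_step(w, b, x, y, lr):
    """One gradient descent step on a single example."""
    g = predict(w, b, x) - y
    return [wi - lr * g * xi for wi, xi in zip(w, x)], b - lr * g


def adversarial_train(data, epochs=200, lr=0.1, epsilon=0.2):
    """Train on each clean example and on its FGSM-perturbed copy."""
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        for x, y in data:
            x_adv = fgsm(w, b, x, y, epsilon)
            for sample in (x, x_adv):
                w, b = sgd_step(w, b, sample, y, lr)
    return w, b
```

The same `fgsm` function doubles as a test harness: comparing `loss` on a clean input and on its perturbed copy gives a rough measure of how sensitive the model is to small input changes.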
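For the model hardening strategy above, one simple form of "adding noise to inputs" is to average a model's predictions over several randomly perturbed copies of each input, in the spirit of randomized smoothing. The sketch below is a simplified illustration, not a certified defense; `smoothed_predict`, `sigma`, and `n_samples` are names and defaults assumed for this example, and `predict_fn` stands in for whatever model is being protected.

```python
import random


def smoothed_predict(predict_fn, x, sigma=0.1, n_samples=100, seed=0):
    """Average predict_fn over Gaussian-perturbed copies of the input x.

    predict_fn: any callable mapping a list of floats to a numeric score.
    sigma:      standard deviation of the noise added to every feature.
    seed:       fixed here only to make the sketch reproducible.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
        total += predict_fn(noisy)
    return total / n_samples


if __name__ == "__main__":
    # Toy stand-in model: a hard threshold on the feature sum.
    def toy_model(features):
        return 1.0 if sum(features) > 0 else 0.0

    # Near the decision boundary the smoothed score lies between 0 and 1,
    # so a tiny nudge to the input no longer flips the output outright.
    print(smoothed_predict(toy_model, [0.01, -0.01]))
```

The trade-off is cost and accuracy: each prediction now needs `n_samples` model calls, and too large a `sigma` blurs legitimate inputs as well.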
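The monitoring strategy above can start very small. The following sketch flags a metric, for example a model's average prediction confidence or its request rate, when it drifts far from its recent history using a rolling z-score. The `DriftMonitor` class, its window size, and its threshold are hypothetical choices for illustration; a production system would normally feed such a signal into its existing alerting and incident response process.

```python
from collections import deque
from statistics import mean, stdev


class DriftMonitor:
    """Flag values that deviate strongly from a rolling window of recent values."""

    def __init__(self, window=50, threshold=3.0, min_samples=10):
        self.history = deque(maxlen=window)  # recent non-anomalous values
        self.threshold = threshold           # z-score above which we alert
        self.min_samples = min_samples       # warm-up before alerting

    def observe(self, value: float) -> bool:
        """Return True if value looks anomalous relative to recent history."""
        anomalous = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                anomalous = True
        # Keep anomalies out of the baseline so they do not mask later ones.
        if not anomalous:
            self.history.append(value)
        return anomalous


if __name__ == "__main__":
    monitor = DriftMonitor()
    for i in range(20):
        monitor.observe(0.90 + 0.01 * ((i % 3) - 1))  # normal confidence values
    print(monitor.observe(0.20))  # True: sudden drop in confidence
```

A sudden drop like the last value could indicate an attack, a data pipeline fault, or ordinary drift, which is why the alert should lead into an incident response plan rather than an automatic action.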

Conclusion

AI model security is a critical concern that must be addressed proactively. By implementing strategies such as data encryption, regular security audits, robust access controls, adversarial training, model hardening, continuous monitoring, and community collaboration, organizations can substantially reduce the risk to their AI models. Prioritizing AI model security not only protects sensitive data and prevents financial losses but also supports the reliability and trustworthiness of AI systems across applications. As AI technology continues to advance, maintaining strong security measures will remain essential.
