Generative AI enables machines to create new content, from text and images to music and code, and it is among the most consequential recent advances in artificial intelligence. Putting it to good use requires an understanding of the tech stack behind it. This article walks through the key components of that stack (frameworks, infrastructure, models, and applications) and gives a clear, accessible overview for readers who are new to the field.

Frameworks: Building Blocks of Generative AI

At the core of the generative AI tech stack are frameworks, which provide the essential tools and libraries for developing and deploying generative models. These frameworks offer pre-built functionalities, making it easier for developers to design and train sophisticated AI systems.

1. TensorFlow and PyTorch:

TensorFlow and PyTorch are two of the most widely used frameworks in the generative AI landscape. TensorFlow, developed by Google, provides a robust platform with extensive support for deploying models across various environments, including mobile and web applications. PyTorch, originally developed by Facebook’s AI Research lab (now part of Meta AI), is known for its dynamic computation graph: the graph of operations is built as the code runs, so ordinary Python control flow can shape the model. This makes generative models easier to debug and to experiment with.
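To make the idea of a dynamic computation graph concrete, here is a minimal sketch in plain Python. It is not PyTorch's implementation: the `Value` class and the `model` function are hypothetical names invented for this illustration, and only scalar addition and multiplication are supported. What it shows is that the graph is recorded while the code runs, so a normal `for` loop decides how many operations end up in it.

```python
class Value:
    """A scalar that records the operations applied to it as they run."""

    def __init__(self, data, parents=(), grad_fns=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents      # Values this one was computed from
        self._grad_fns = grad_fns    # local derivative with respect to each parent

    def __add__(self, other):
        return Value(self.data + other.data, (self, other),
                     (lambda g: g, lambda g: g))

    def __mul__(self, other):
        return Value(self.data * other.data, (self, other),
                     (lambda g: g * other.data, lambda g: g * self.data))

    def backward(self):
        # Order the recorded graph topologically, then apply the chain rule in reverse.
        order, seen = [], set()

        def visit(v):
            if id(v) not in seen:
                seen.add(id(v))
                for p in v._parents:
                    visit(p)
                order.append(v)

        visit(self)
        self.grad = 1.0
        for v in reversed(order):
            for parent, fn in zip(v._parents, v._grad_fns):
                parent.grad += fn(v.grad)


def model(x, w, steps):
    # Ordinary Python control flow decides the shape of the graph on each call.
    out = x
    for _ in range(steps):
        out = out * w
    return out + x


x, w = Value(2.0), Value(3.0)
y = model(x, w, steps=2)   # builds the graph for y = x * w**2 + x
y.backward()               # fills in x.grad and w.grad
```

Calling `model` with a different `steps` value would record a different graph without any change to the class, which is the property the "dynamic" label refers to.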

2. Hugging Face Transformers:

Hugging Face Transformers is another critical library. It is built on top of frameworks such as PyTorch and TensorFlow and started out with a focus on natural language processing (NLP), although it now also covers vision and audio models. It offers a collection of pre-trained models and tools for state-of-the-art NLP tasks, including text generation, translation, and summarization. The ease of integration and the large model repository make it a valuable part of the generative AI tech stack.

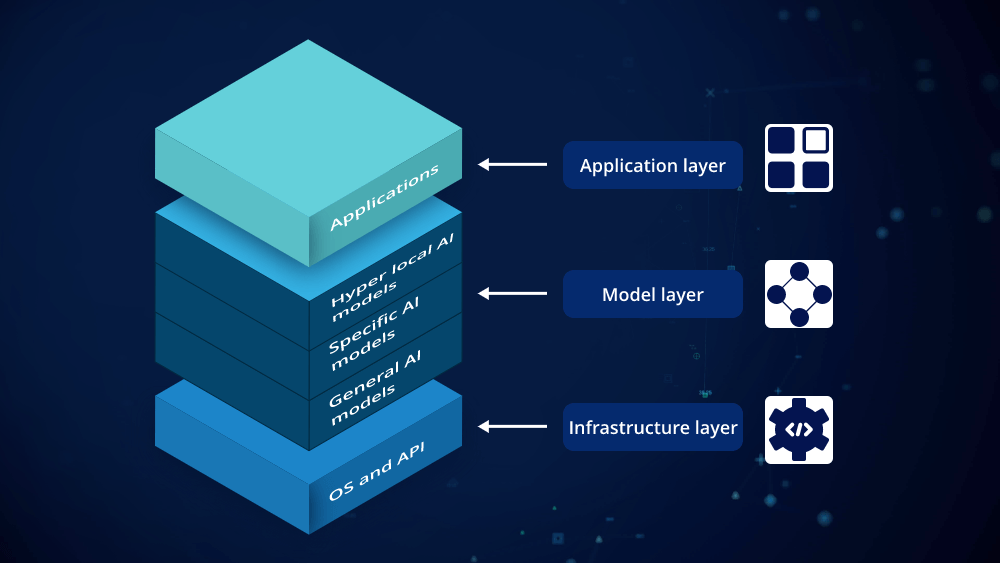

Infrastructure: Supporting Generative AI Models

The infrastructure supporting generative AI is crucial for handling the large-scale computations required for training and deploying models. This includes hardware, cloud services, and specialized platforms.

1. GPUs and TPUs:

Generative AI models, especially deep learning models, require substantial computational power. Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs) are used to accelerate both training and inference. GPUs were originally built for graphics, but their ability to run thousands of operations in parallel makes them well suited to the matrix arithmetic at the core of deep neural networks. TPUs are custom chips developed by Google specifically for tensor operations in machine learning workloads.
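A small sketch can show why parallel hardware helps. The plain-Python matrix multiplication below is only an illustration (real frameworks dispatch this work to optimized GPU or TPU kernels), and `matmul` and `matmul_flops` are names chosen for this example. The point is that every output cell is computed independently of the others, and that the operation count grows with the product of all three dimensions.

```python
def matmul(a, b):
    """Multiply a (rows x inner) matrix by an (inner x cols) matrix."""
    rows, inner, cols = len(a), len(b), len(b[0])
    # Each output cell needs only one row of `a` and one column of `b`,
    # so all rows * cols cells could be computed at the same time.
    return [[sum(a[i][k] * b[k][j] for k in range(inner))
             for j in range(cols)]
            for i in range(rows)]


def matmul_flops(rows, inner, cols):
    """Approximate cost: one multiply and one add per term of each output cell."""
    return 2 * rows * inner * cols


product = matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
cost = matmul_flops(1024, 1024, 1024)   # a single 1024 x 1024 layer-sized product
```

For the 1024 x 1024 case the estimate is roughly 2.1 billion floating-point operations for one product, which is why hardware that runs many of them at once matters.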

2. Cloud Computing Platforms:

Cloud computing platforms like AWS, Google Cloud, and Microsoft Azure offer scalable resources for running generative AI models. These platforms provide powerful virtual machines and managed services that support high-performance computing, storage, and data management. Cloud-based solutions enable organizations to access state-of-the-art infrastructure without investing in expensive hardware.

3. Data Management Tools:

Effective data management is essential for training generative models. Tools for data storage, processing, and retrieval play a significant role in the generative AI tech stack. Technologies such as Apache Hadoop and Apache Spark distribute the storage and processing of large datasets across many machines, which makes it practical to clean, filter, and prepare training data at scale.

Models: The Heart of Generative AI

Generative models are at the heart of generative AI, enabling the creation of new content based on learned patterns from data. Several types of generative models are widely used in the industry.

1. Generative Adversarial Networks (GANs):

GANs consist of two neural networks, a generator and a discriminator, that are trained against each other. The generator creates new data instances, while the discriminator tries to tell them apart from real ones. As the discriminator gets better at spotting fakes, the generator is pushed to produce more convincing samples, which makes GANs effective for tasks such as image generation and style transfer.
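The adversarial setup can be expressed as two loss functions. The sketch below assumes the discriminator outputs a probability between 0 and 1 that a sample is real, and it uses the common non-saturating form of the generator loss; the function names and the example probabilities are made up for illustration, and no networks are actually trained here.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy with real samples labelled 1 and generated samples labelled 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating loss: the generator wants the discriminator to output 1 on its samples."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# Hypothetical discriminator outputs for a batch of real and generated samples.
d_real = [0.9, 0.8]
d_fake = [0.1, 0.2]

d_loss = discriminator_loss(d_real, d_fake)   # low: the discriminator is winning
g_loss = generator_loss(d_fake)               # high: the generator is being caught
```

The two losses pull in opposite directions: anything that raises the discriminator's output on generated samples lowers `g_loss` and raises `d_loss`.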

2. Variational Autoencoders (VAEs):

VAEs are probabilistic models that learn to encode input data into a distribution over a latent space and then decode samples from that space back into data. They are useful for tasks such as image generation, anomaly detection, and data reconstruction. Because training keeps the latent space smooth and close to a known prior distribution, new content can be generated simply by sampling a latent point and decoding it.
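Two small pieces of arithmetic sit at the center of most VAE implementations: sampling a latent vector through the reparameterization trick, and the KL divergence term that keeps the encoder's distribution close to a standard normal prior. The sketch below assumes a diagonal Gaussian encoder that outputs a mean and a log-variance per latent dimension; the function names are illustrative and the encoder and decoder networks themselves are left out.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps drawn from N(0, 1), one value per dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL divergence from N(mu, sigma^2) to N(0, 1), summed over latent dimensions."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv
                     for m, lv in zip(mu, log_var))


rng = random.Random(0)                 # seeded so the sketch is repeatable
mu, log_var = [0.0, 1.0], [0.0, 0.0]   # hypothetical encoder outputs for one input
z = reparameterize(mu, log_var, rng)   # latent vector that a decoder would consume
kl = kl_to_standard_normal(mu, log_var)
```

In a full model the training loss would add a reconstruction term to `kl`, and generation would decode a `z` sampled directly from the prior.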

3. Transformer Models:

Transformers, such as GPT (Generative Pre-trained Transformer) models, have revolutionized natural language processing. These models use attention mechanisms to process and generate text, enabling applications such as text generation, translation, and summarization. Transformer models are known for their scalability and effectiveness in handling large-scale text data.
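The attention mechanism mentioned above can be sketched in a few lines. This is scaled dot-product attention for a single head, written in plain Python with lists instead of tensors; it omits the learned projections, masking, and multiple heads that real transformer models use, and the function names are chosen for this example.

```python
import math


def softmax(xs):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(xs)                              # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """For each query, return a weighted average of the values."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        outputs.append([sum(w * v[j] for w, v in zip(weights, values))
                        for j in range(len(values[0]))])
    return outputs


keys = [[1.0, 0.0], [1.0, 0.0]]      # two identical keys
values = [[0.0, 2.0], [4.0, 6.0]]
result = attention([[1.0, 1.0]], keys, values)
```

Because the two keys are identical, the query weights them equally and the output is the plain average of the two value vectors.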

Applications: Real-World Impact of Generative AI

The generative AI tech stack is applied across various domains, showcasing its versatility and potential.

1. Content Creation:

Generative AI is transforming content creation by automating tasks such as writing articles, generating artwork, and composing music. Tools powered by generative models can assist writers, artists, and musicians by providing inspiration or generating initial drafts, significantly speeding up the creative process.

2. Healthcare:

In healthcare, generative AI models are used to create synthetic medical data for research, develop personalized treatment plans, and simulate drug interactions. By generating realistic patient data, researchers can test new treatments and improve healthcare outcomes.

3. Gaming and Entertainment:

Generative AI is enhancing gaming and entertainment by creating realistic environments, characters, and narratives. AI-driven content generation enables the development of immersive experiences and dynamic storytelling, offering new possibilities for game developers and content creators.

4. Data Augmentation:

Generative models are also employed in data augmentation, where they generate additional data to improve the performance of machine learning models. This is particularly useful in scenarios with limited data, helping to enhance model accuracy and robustness.
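As a deliberately simple illustration of the idea, the sketch below fits an independent Gaussian to each feature of a small dataset and samples new rows from it. This stands in for the far more capable generative models discussed earlier; the function names and the toy data are assumptions made for the example.

```python
import random
import statistics


def fit_gaussian(samples):
    """Estimate a mean and standard deviation for each feature column."""
    columns = list(zip(*samples))
    return [(statistics.mean(c), statistics.stdev(c)) for c in columns]


def augment(samples, n_new, seed=0):
    """Return the original rows plus n_new synthetic rows drawn from the fitted model."""
    rng = random.Random(seed)
    params = fit_gaussian(samples)
    synthetic = [[rng.gauss(mu, sigma) for mu, sigma in params]
                 for _ in range(n_new)]
    return samples + synthetic


# Toy dataset: three rows with two features each.
data = [[1.0, 10.0], [2.0, 12.0], [3.0, 11.0]]
augmented = augment(data, n_new=5)
```

The synthetic rows follow the same per-feature statistics as the originals, but this simple model ignores correlations between features, which is one reason richer generative models are used in practice.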

Conclusion

The generative AI tech stack encompasses a range of frameworks, infrastructure, models, and applications, each playing a crucial role in the development and deployment of generative AI systems. By understanding these components, organizations and developers can effectively harness the power of generative AI to create innovative solutions and drive advancements across various fields. As technology continues to evolve, staying informed about the latest developments in the generative AI tech stack will be essential for leveraging its full potential.
